Azure Files is a fully managed, serverless file share service hosted on Azure and accessible via industry-standard protocols: SMB, NFS and the Azure Files REST API.

Azure file shares can be mounted concurrently by cloud or on-premises deployments, and they are extremely useful for replacing or supplementing on-premises file servers. In Dynamics 365 Business Central SaaS scenarios, I think this is a great service to use if you want to work with files and give your users an experience similar to working with files locally.

I previously described a solution for using Azure file shares with Dynamics 365 Business Central here, and I know that some partners have started to use this service. If you want a powerful file service in the cloud, though, there are some best practices and personal recommendations worth sharing and evaluating (unless your project has particular requirements).

The first recommendation concerns the storage account type. When you create a storage account via the Azure Portal, by default it is created in the Standard performance tier (now GPv2). GPv2 storage accounts store Azure file share data on hard disk drive-based (HDD-based) hardware. In addition to Azure file shares, GPv2 storage accounts can also store other storage resources such as blob containers, queues, or tables.

If you want better performance and higher throughput for your files, you can select the Premium tier and then select File shares as the account type:

This type of account (called a FileStorage storage account) stores files on SSD. FileStorage accounts can only be used to store Azure file shares; other resources such as blob containers, queues or tables are not permitted. All premium file shares can scale up to 100 TiB by default.
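As a quick illustration of the difference between the two account types, the sketch below captures the facts above in a small lookup table. The names `ACCOUNT_TYPES` and `can_host` are hypothetical and exist only for this example; "Premium" here means a Premium account created with File shares as the account type.

```python
# Facts from the text above, captured as a small lookup table.
# ACCOUNT_TYPES and can_host are illustrative names, not part of any Azure SDK.
ACCOUNT_TYPES = {
    "Standard": {
        "kind": "GPv2",
        "media": "HDD",
        "services": {"file", "blob", "queue", "table"},
    },
    "Premium": {
        "kind": "FileStorage",
        "media": "SSD",
        "services": {"file"},  # file shares only
    },
}


def can_host(tier, service):
    """Return True if an account of the given tier can store the given service."""
    return service in ACCOUNT_TYPES[tier]["services"]


print(can_host("Standard", "blob"))  # True
print(can_host("Premium", "blob"))   # False
print(can_host("Premium", "file"))   # True
```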

In a storage account you can use different storage services (blob containers, tables, file shares). All storage services in a single storage account share the same storage account limits, and mixing storage services in the same storage account makes it more difficult to troubleshoot performance issues. It’s recommended to deploy each Azure file share in its own separate storage account, so that the share does not compete with other services for those limits.

In the Advanced section, there’s an important setting called Enable large file shares. A single standard file share in a general purpose account can now support up to 100 TiB capacity, 10K IOPS, and 300 MiB/s throughput. The default limit is 5 TiB. All premium file shares in Azure FileStorage accounts currently support large file shares by default. If you’re using a standard file share and you need more than 5 TiB of files, you need to enable this flag:

Please remember that for premium file shares, quota means provisioned size. The provisioned size is the amount that you will be billed for, regardless of actual usage. The maximum size of a file in a file share is 1 TiB, and there’s no limit on the number of files in a file share.
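The capacity limits and the billing rule above can be summarized in a few lines of code. This is only a minimal sketch: the function names are hypothetical, the sizes are the ones quoted in this post, and billing standard shares on used capacity is an assumption that the text above does not state.

```python
STANDARD_DEFAULT_LIMIT_TIB = 5   # standard share without "Enable large file shares"
LARGE_SHARE_LIMIT_TIB = 100      # standard share with the flag, or any premium share


def needs_large_file_shares(tier, required_tib):
    """Return True if 'Enable large file shares' must be turned on."""
    if required_tib > LARGE_SHARE_LIMIT_TIB:
        raise ValueError("a single file share cannot exceed 100 TiB")
    # Premium (FileStorage) shares support large file shares by default.
    return tier == "Standard" and required_tib > STANDARD_DEFAULT_LIMIT_TIB


def billed_gib(tier, provisioned_gib, used_gib):
    """Return the capacity that drives the bill for a share."""
    if tier == "Premium":
        return provisioned_gib  # quota == provisioned size, regardless of usage
    return used_gib             # assumption: standard shares are billed on usage


print(needs_large_file_shares("Standard", 8))  # True
print(needs_large_file_shares("Premium", 8))   # False
print(billed_gib("Premium", 1024, 100))        # 1024
```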

The Data protection section allows you to configure the soft-delete policy for Azure file shares (useful for easily recovering your data when it’s mistakenly deleted by an application or another storage account user). Please remember to set the desired number of days that a file share marked for deletion persists until it’s permanently deleted:

A common question that many partners raise here is: should I use the Standard tier (GPv2) or the Premium tier for storing my files in Dynamics 365 Business Central projects?

Azure Premium Storage offers lower latency, compared to Standard Storage, because it is based on faster SSDs. For blob storage, Azure advertises latency of single-digit milliseconds for Premium Storage, and milliseconds for Standard Storage (hot or cool tier).

The question you should ask yourself is: do I really need very high throughput and very low latency on files for my project? In my opinion, the Standard tier (GPv2) delivers very good performance in a classic ERP project.

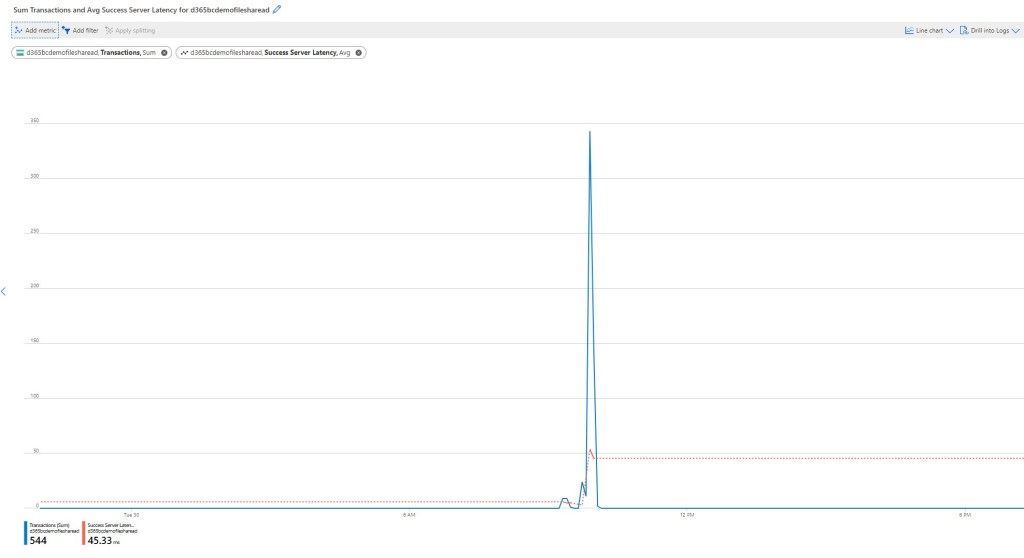

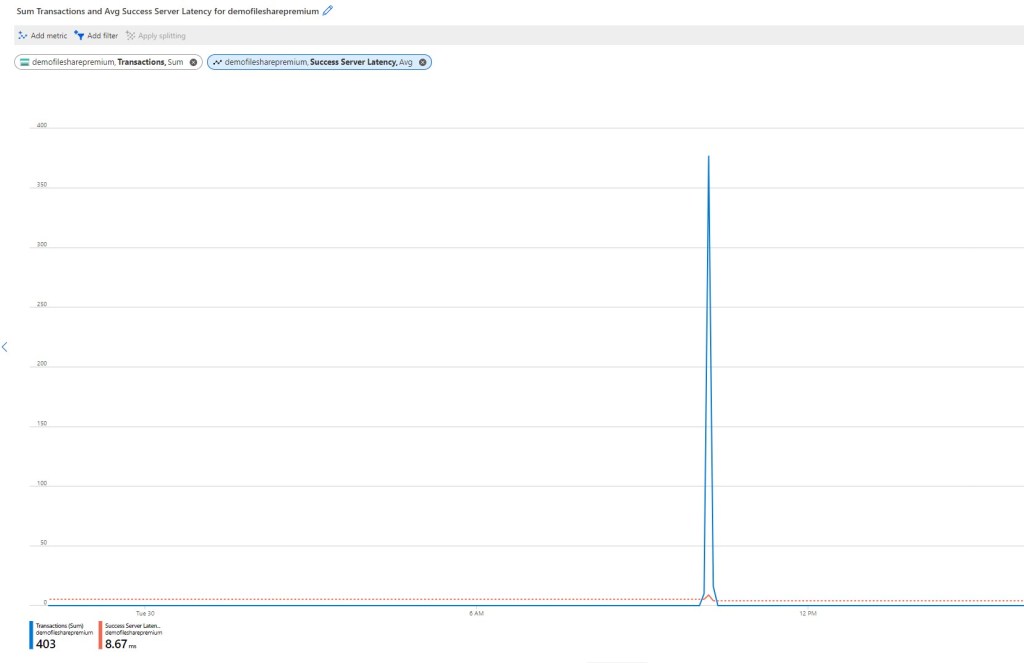

To give you a real benchmark, I’ve created two file shares, one in a Standard (GPv2) storage account and one in a Premium (FileStorage) storage account:

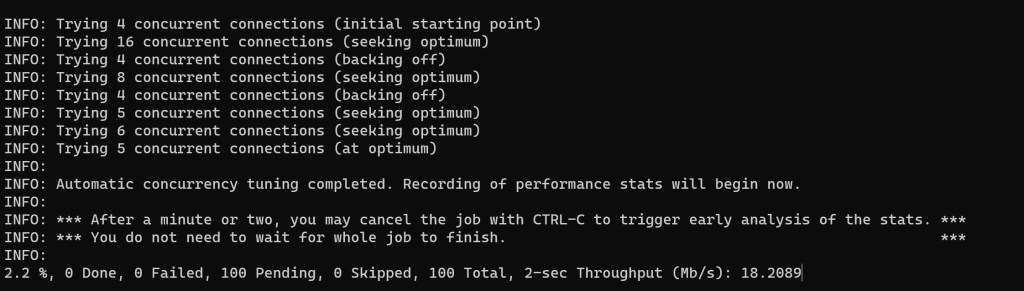

In each of these storage accounts I created a file share and ran some stress tests using the AzCopy tool. AzCopy has a feature (currently in preview) that lets you run performance benchmarks against a storage account container by uploading or downloading test data to or from a specified destination.

You can execute a performance benchmark test on a file share by executing AzCopy from a command prompt with the following parameters:

azcopy bench "https://[account].file.core.windows.net/[container]?<SAS>"

where <SAS> is a Shared Access Signature that you need to create in order to access your container.

This command performs an upload benchmark with default parameters. Transferred data are deleted at the end of the test run. Benchmark mode will automatically tune itself to the number of parallel TCP connections that gives the maximum throughput:

The benchmark executed on the Standard file share gives the following result:

The benchmark executed on the Premium file share gives the following result:

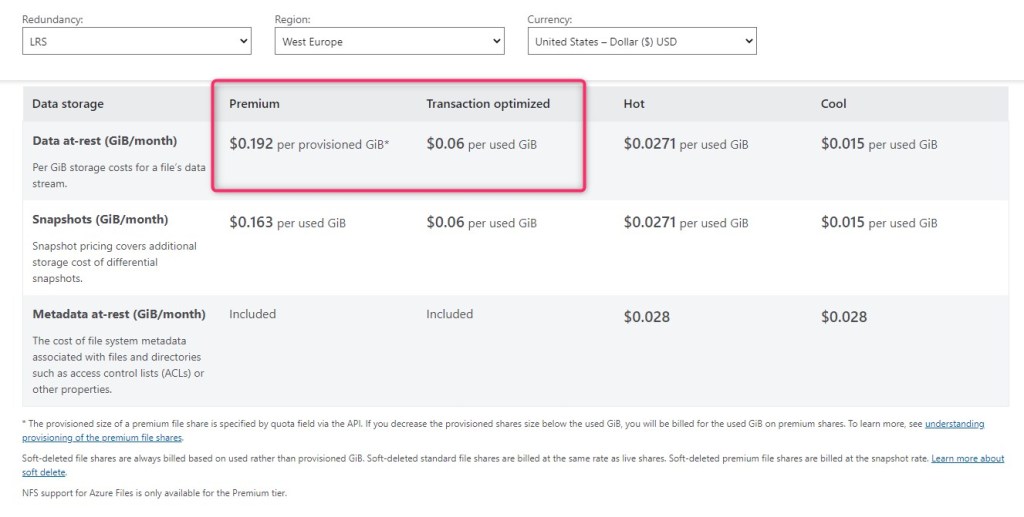

As you can see, the Premium tier delivers very low latency (8 ms on average) compared to the Standard tier (45 ms on average). But the Standard tier latency is absolutely great for this number of transactions per minute (100 files, 1 MB each). Do you really need the Premium tier? Remember that you can save money with the Standard tier (pricing at the time of writing this post is the following):

With AzCopy you can also create various tests by selecting the file size and the number of files to upload. For example, the following command runs a benchmark test that uploads 500 files, each 2 MB in size:

azcopy bench "https://[account].file.core.windows.net/[container]?<SAS>" --file-count 500 --size-per-file 2M
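If you want to run several benchmarks with different settings, it can be handy to assemble the command line in a script. The following is a minimal sketch, not an official AzCopy wrapper: `bench_url` and `bench_command` are hypothetical helper names, and `myaccount`, `myshare` and `<SAS>` are placeholders. Only the `--file-count` and `--size-per-file` options shown above are used.

```python
import shlex


def bench_url(account, share, sas):
    """Build the share URL for `azcopy bench`.

    The SAS token may be passed with or without its leading '?'.
    """
    return f"https://{account}.file.core.windows.net/{share}?{sas.lstrip('?')}"


def bench_command(url, file_count=None, size_per_file=None):
    """Return the azcopy argument list; omitted options keep AzCopy's defaults."""
    args = ["azcopy", "bench", url]
    if file_count is not None:
        args += ["--file-count", str(file_count)]
    if size_per_file is not None:
        args += ["--size-per-file", size_per_file]
    return args


# Placeholder values: replace them with your own account, share and SAS token.
url = bench_url("myaccount", "myshare", "<SAS>")
command = bench_command(url, file_count=500, size_per_file="2M")

# Print a shell-safe version of the command (the URL gets quoted).
print(shlex.join(command))
```

To actually execute the benchmark, the list can be passed to `subprocess.run(command, check=True)`, assuming AzCopy is installed and available on the PATH.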

If you have strict latency requirements, I suggest running benchmarks to evaluate which tier you need for your storage account; otherwise, the Standard tier (GPv2) is absolutely a good and money-saving choice.
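The decision rule above can be written down as a tiny sketch. The latencies are the averages measured in the benchmark earlier in this post, while the two latency requirements (100 ms and 20 ms) and the function name `recommend_tier` are purely hypothetical values chosen for illustration.

```python
def recommend_tier(standard_latency_ms, max_latency_ms):
    """Suggest Premium only when the measured Standard latency misses the requirement."""
    return "Standard" if standard_latency_ms <= max_latency_ms else "Premium"


measured_standard_ms = 45  # average latency measured on the Standard share
measured_premium_ms = 8    # average latency measured on the Premium share

print(recommend_tier(measured_standard_ms, 100))  # Standard
print(recommend_tier(measured_standard_ms, 20))   # Premium

# How many times slower the Standard share was in this benchmark.
print(round(measured_standard_ms / measured_premium_ms, 1))  # 5.6
```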

Remember that at the moment you cannot directly convert a Standard file share into a Premium file share. If you want to switch from the Standard to the Premium tier, you need to create a new file share and then copy the data from the old share to the new one. You can use the AzCopy tool for that (azcopy copy command).
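A share-to-share copy could be scripted along the following lines. This is only a sketch under stated assumptions: `copy_command` is a hypothetical helper, the account and share names are placeholders, each URL needs its own SAS token, and `--recursive` is added so that the whole directory tree is copied.

```python
def copy_command(source_url, dest_url):
    """Return the azcopy argument list for copying one share into another."""
    return ["azcopy", "copy", source_url, dest_url, "--recursive"]


# Placeholder URLs: the old Standard share and the new Premium share.
source = "https://standardaccount.file.core.windows.net/myshare?<SAS>"
destination = "https://premiumaccount.file.core.windows.net/myshare?<SAS>"

command = copy_command(source, destination)
print(command)
```

As with the benchmark, the resulting list can be handed to `subprocess.run(command, check=True)` on a machine where AzCopy is installed.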

Please also remember that with Azure Files AD authentication, Azure file shares can work with Active Directory Domain Services (AD DS) hosted on-premises for access control. This means that company users can map an Azure file share using their Active Directory credentials and access that storage like a local drive.

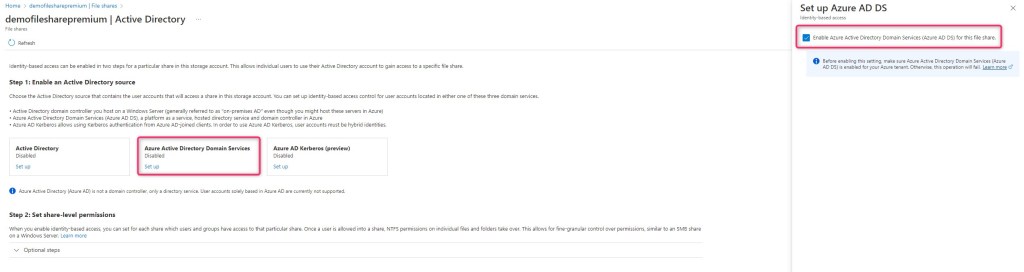

To enable Azure AD DS authentication over SMB for Azure Files, you can set a property on storage accounts by using the Azure portal, Azure PowerShell, or Azure CLI. Setting this property implicitly “domain joins” the storage account with the associated Azure AD DS deployment. Azure AD DS authentication over SMB is then enabled for all new and existing file shares in the storage account.

In your Storage Account File shares section, select Active directory and click on Not configured:

On the page that opens, select Azure Active Directory Domain Services, then switch the toggle to Enabled and click Save:

If you want to give your users a smooth file experience in the cloud, I recommend giving this service a try…