I have received many requests in the past about how to use FTP in a SaaS environment (especially with Dynamics 365 Business Central), and I’ve blogged about several possible solutions (just search for the word FTP on this blog).

But now there’s a new, easy way to move files to Azure via SFTP: as of a few weeks ago, Azure Blob Storage supports SFTP. You can now securely connect to the Blob Storage endpoint of an Azure Storage account by using an SFTP client, and then upload and download files. Before this feature was released, if you wanted to use SFTP to transfer data to Azure Blob Storage, you had to either purchase a third-party product or orchestrate your own solution.

To support SFTP on Azure Blob Storage, you need a standard general-purpose v2 or premium block blob storage account. If you’re connecting from an on-premises network, make sure that your client allows outgoing communication through port 22, which SFTP uses.

Enabling SFTP on a storage account container

To support SFTP, when creating the storage account, you need to set the following options in the Advanced tab:

Once the storage account is created, a new SFTP option appears in the Settings blade. Here you can enable or disable SFTP support and add or disable SFTP users:

User account setup

To support SFTP, you need to add at least one local user. To add a user, click on Add local user and, in the Add local user configuration pane, enter the name of the user and then select which authentication methods you’d like to associate with this local user. You can associate a password and/or an SSH key. If you select SSH Password, you need to provide the user details, and the password will appear at the end of the user’s configuration. If you select SSH Key pair instead, then select Public key source to specify a key source.

Here I’m creating a user (called sftpuser) with a password:

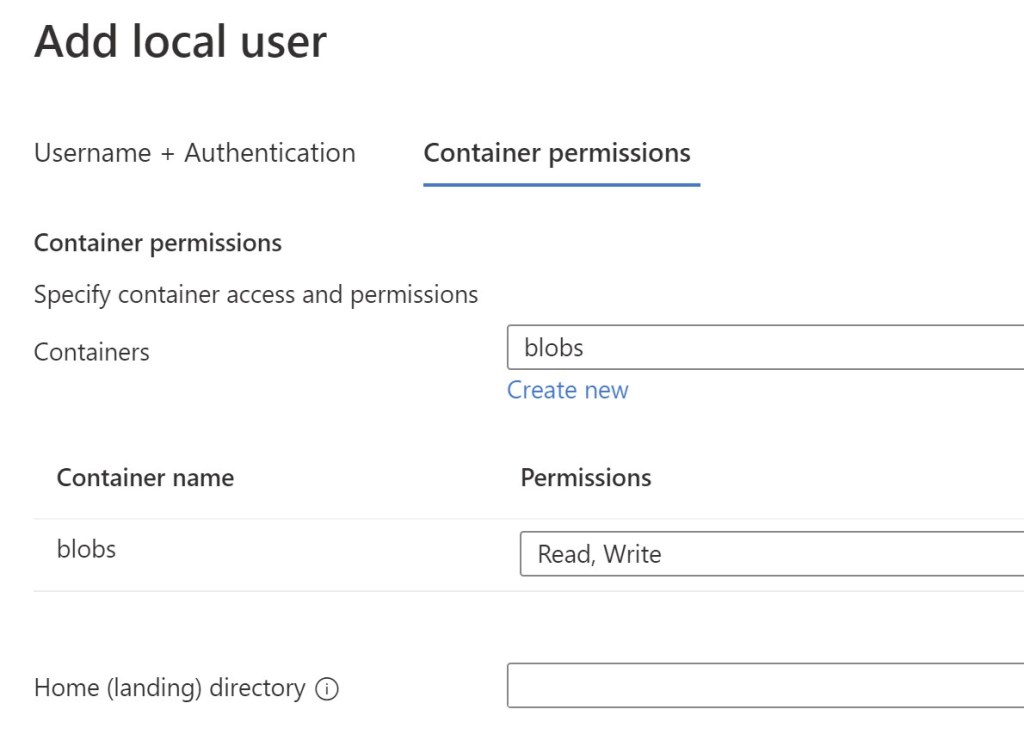

In the Container permission screen you need to specify the container inside your storage account that you want to give access to this user via SFTP and for the selected container you need to specify permissions (types of operations that you want to enable for this user):

In the Home (landing) directory field you can specify a “container/directory” path relative to the storage account to be considered as the landing directory for the user. Home directory is only the landing, or initial, directory that the connecting local user is placed in. Local users can navigate to any other path in the container they are connected to if they have the appropriate container permissions.

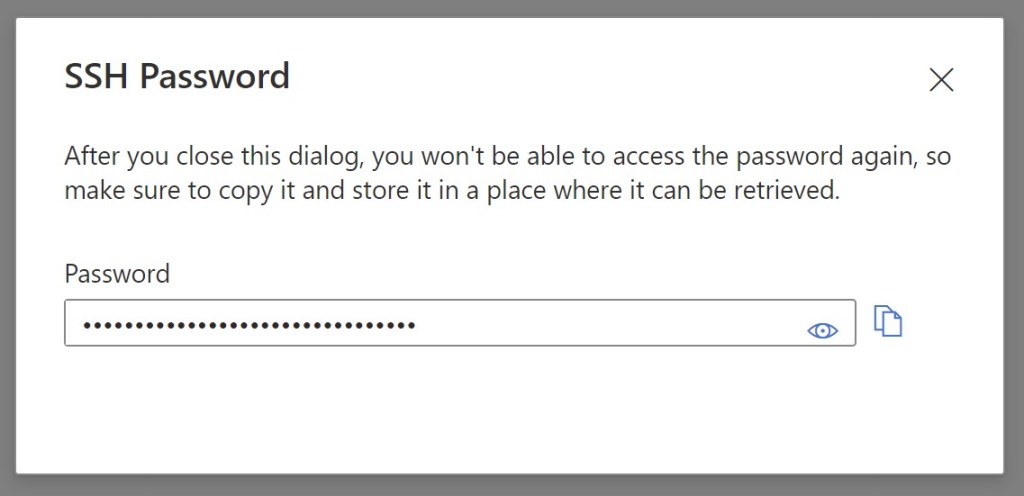

When done, a password is displayed for the newly created user:

Please remember that you can’t retrieve this password later, so make sure to copy it and store it in a safe place.

The SFTP username is always in the storage_account_name.container_name.username format, so in my example above the username is blobstorageftp.blobs.sftpuser.
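If you need to build this login name in a script (for example when provisioning several users), a minimal sketch could look like the following. The function name `sftp_username` is hypothetical, and the only rule it encodes is the storage_account_name.container_name.username format described above.

```python
def sftp_username(storage_account: str, container: str, local_user: str) -> str:
    """Compose the SFTP login name in the account.container.user format."""
    parts = [storage_account, container, local_user]
    # Reject empty parts, since they would produce a malformed login name.
    if not all(parts):
        raise ValueError("storage account, container and user name are all required")
    return ".".join(parts)


# Values taken from the example in this post.
print(sftp_username("blobstorageftp", "blobs", "sftpuser"))
# prints: blobstorageftp.blobs.sftpuser
```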

Data transfer

Now you’re ready to connect with your favourite SFTP client. As an example, here I’m connecting with FileZilla:

Once connected, the user lands in the default folder specified in the Home (landing) directory field of the user configuration, so in my case it’s the container’s root folder:

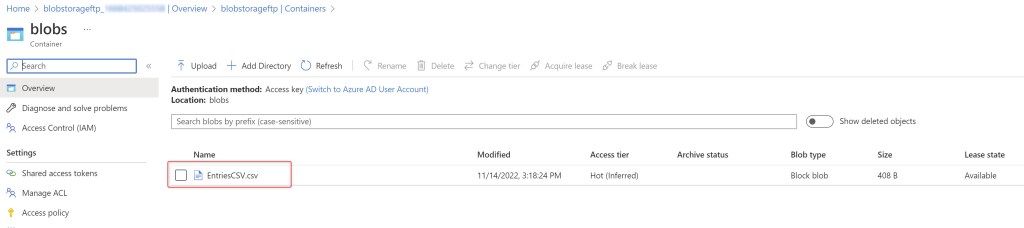

If you upload a file from your SFTP client (as an example I’m uploading a .CSV file):

the file is automatically uploaded into the Azure Blob Storage container:
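The same upload can also be scripted instead of done from a GUI client. Here is a minimal sketch in Python, assuming the third-party `paramiko` library is installed (`pip install paramiko`) and that the user authenticates with a password as in the example above. The helper names `sftp_host` and `upload_file` are hypothetical; the host name follows the usual `<storage account>.blob.core.windows.net` pattern of the Blob Storage endpoint.

```python
import os


def sftp_host(storage_account: str) -> str:
    """Host name of the Blob Storage endpoint for a storage account."""
    return f"{storage_account}.blob.core.windows.net"


def upload_file(storage_account: str, username: str, password: str,
                local_path: str, remote_path: str = "") -> str:
    """Upload one local file via SFTP and return the remote path used."""
    import paramiko  # third-party dependency, imported only when uploading

    # Default to the same file name, placed in the user's landing directory.
    remote_path = remote_path or os.path.basename(local_path)
    client = paramiko.SSHClient()
    client.load_system_host_keys()
    # Demo shortcut: trust unknown host keys. Pin the host key in real use.
    client.set_missing_host_key_policy(paramiko.AutoAddPolicy())
    try:
        client.connect(sftp_host(storage_account), port=22,
                       username=username, password=password,
                       allow_agent=False, look_for_keys=False)
        sftp = client.open_sftp()
        try:
            sftp.put(local_path, remote_path)
        finally:
            sftp.close()
    finally:
        client.close()
    return remote_path


# Example call (file name and environment variable name are placeholders):
# upload_file("blobstorageftp", "blobstorageftp.blobs.sftpuser",
#             os.environ["SFTP_PASSWORD"], "customers.csv")
```

Reading the password from an environment variable, rather than hard-coding it, fits the earlier warning that the generated password cannot be retrieved later.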

Why is this an important feature for Dynamics 365 Business Central?

Because now you have a native way to handle SFTP data transfer to/from a Business Central SaaS environment directly from AL code and without always using other cloud services (like Logic Apps for example):

- If you want to transfer a file to Dynamics 365 Business Central via SFTP, just transfer it to the Azure Blob Storage container via SFTP and then read the file via AL code.

- If you want to make files generated by Business Central available to other applications via SFTP, just create the file in the Azure Blob Storage container (via AL code) and the external application can then use SFTP to access it.

Obviously, you will continue to rely on Azure Logic Apps if you’re forced to use external SFTP servers.

Some important things to remember:

- You can authenticate local users connecting via SFTP by using a password or a Secure Shell (SSH) public-private keypair. Azure Blob Storage doesn’t support Azure Active Directory (Azure AD) authentication or authorization via SFTP. Instead, SFTP utilizes a new form of identity management called local users.

- Local users must use either a password or a Secure Shell (SSH) private key credential for authentication. You can have a maximum of 1000 local users for a storage account.

- When using SFTP, you may want to limit public access through configuration of a firewall, virtual network, or private endpoint. These settings are enforced at the application layer, which means they aren’t specific to SFTP and will impact connectivity to all Azure Storage Endpoints.

What about costs?

Enabling the SFTP endpoint has a cost of $0.30 per hour, and this cost will start to be applied on or after December 1, 2022. Transaction, storage, and networking prices for the underlying storage account also apply.

$0.30 per hour is not a small cost, and it adds up quickly if you keep the SFTP endpoint active 24 hours a day, 365 days a year. I don’t recommend using this feature for that scenario, because in that case other FTP servers (VMs or external) are better in terms of cost.
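To get a feeling for the numbers, here is a small sketch that estimates the yearly cost of the SFTP endpoint alone. It assumes the $0.30 hourly rate quoted above and that you are charged only for the hours in which the endpoint is enabled; the function name `sftp_endpoint_cost` is hypothetical, and storage, transaction, and networking costs are not included.

```python
HOURLY_RATE_USD = 0.30  # SFTP endpoint price quoted in this post


def sftp_endpoint_cost(hours_per_day: float, days: int = 365,
                       hourly_rate: float = HOURLY_RATE_USD) -> float:
    """Estimate the SFTP endpoint cost in USD for the given usage pattern."""
    if not 0 <= hours_per_day <= 24:
        raise ValueError("hours_per_day must be between 0 and 24")
    return round(hourly_rate * hours_per_day * days, 2)


print(sftp_endpoint_cost(24))  # always on: 2628.0
print(sftp_endpoint_cost(2))   # enabled 2 hours per day: 219.0
```

An always-on endpoint costs about $2,628 per year, while enabling it for a two-hour transfer window every day costs about $219.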

If you’re using SFTP on Azure Blob Storage, I recommend activating it only when needed. You can enable or disable the SFTP service via API calls, Azure PowerShell, or the Azure CLI. For example, with Azure PowerShell:

$account = Set-AzStorageAccount -ResourceGroupName "myresourcegroup" -AccountName "mystorageaccount" -EnableSftp $false
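If you prefer to drive the switch from a scheduled script, the following sketch wraps the equivalent Azure CLI command (`az storage account update --enable-sftp`) in Python. It assumes the Azure CLI is installed and already logged in; the function names are hypothetical and the resource names are the placeholders used in the command above.

```python
import shutil
import subprocess


def sftp_toggle_command(resource_group: str, account_name: str,
                        enabled: bool) -> list:
    """Build the Azure CLI arguments that enable or disable SFTP."""
    return [
        "az", "storage", "account", "update",
        "--resource-group", resource_group,
        "--name", account_name,
        "--enable-sftp", "true" if enabled else "false",
    ]


def set_sftp_enabled(resource_group: str, account_name: str,
                     enabled: bool) -> None:
    """Run the Azure CLI to switch SFTP on or off for a storage account."""
    command = sftp_toggle_command(resource_group, account_name, enabled)
    # Resolve the executable explicitly (on Windows the CLI is az.cmd).
    executable = shutil.which(command[0])
    if executable is None:
        raise RuntimeError("Azure CLI (az) not found on PATH")
    subprocess.run([executable] + command[1:], check=True)


# Example: enable SFTP before a transfer window and disable it afterwards.
# set_sftp_enabled("myresourcegroup", "mystorageaccount", True)
# ... transfer files ...
# set_sftp_enabled("myresourcegroup", "mystorageaccount", False)
```

Keeping the command builder separate from the function that runs it makes it easy to check what will be executed before touching the real storage account.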

Please also note that SFTP support for Azure Blob Storage at the time of writing this post is not yet generally available in the West Europe region.

At $0.30 an hour, it could be a costly affair.

There are approx. 8,760 hours in a year. This could result in an annual charge of $2,628 for the SFTP option, on top of any Storage Account related costs.

Do you think it’s a viable/cost-effective option that customers could implement?


Good catch. I agree, the costs of having it always active are very high. In the scenario I had in mind, SFTP would not be always active, and you can disable it automatically via PowerShell or the Azure CLI, for example. In this case you pay only for the time when SFTP is actually active.
