In recent weeks I have had many requests and discussions with partners in the Dynamics community asking for a better way to make integrations between external applications and Dynamics 365 Business Central reliable in a fully cloud world.

In simple words, the scenario is always the same: we have Dynamics 365 Business Central and we have an external application. These two worlds need to communicate reliably, without losing transactions, and they usually have different requirements and operational limits.

The classic configuration that I see in 99% of projects is represented in the following schema:

Here, Dynamics 365 Business Central sends transactions to the external application and the external application sends transactions to Dynamics 365 Business Central. Each application exposes its own set of APIs or web services, and the other side calls them directly.

Many questions were raised, and I think they can be summarized as follows:

- Dynamics 365 Business Central has strict limits on API calls. How can I work around them?

- My integration is not fully reliable. If a call to a Dynamics 365 Business Central API is lost, the transmitted data is lost.

- How can I scale my integrations?

- How can I decouple my external system from Dynamics 365 Business Central? The two systems can have different requirements in terms of timeouts and so on.

- I’m using Business Central webhooks for integrations, but the transaction is not reliable because some notifications are lost when the API call fails.

The scenario represented in the schema above works if the two systems exchange a small number of transactions. As you can see from the schema, the two systems are tightly coupled. What happens if the number of transactions grows? Or if one of the two systems needs to send a large number of transactions every second and the other cannot keep up? Or if handling a transaction in one of the two systems is a complex process that takes time, so you start receiving timeouts?

Short answer: you start to have big problems and your integrations become unreliable.

When I see a scenario like the one described above, I always suggest decoupling the two systems. Dynamics 365 Business Central should not talk directly to the external application, and the external application should not directly call Dynamics 365 Business Central APIs. In the previous schema, you need something between the two main systems that acts as a “reliable message collector and dispatcher”. With something like that in place, you gain two big advantages:

- Dynamics 365 Business Central can send a transaction to the external application without depending on it and on its operational limits.

- The external application can send a transaction to Dynamics 365 Business Central without depending on it and on its operational limits.

The new simple architectural schema for handling such scenarios could be the following:

The new piece of the SaaS architecture represented here is an Azure Queue.

Azure Queue Storage is a service for storing large numbers of messages. You access messages from anywhere in the world via authenticated calls using HTTP or HTTPS. A queue message can be up to 64 KB in size. A queue may contain millions of messages, up to the total capacity limit of a storage account. Queues are commonly used to create a backlog of work to process asynchronously.

Using an Azure Queue is the simplest way in a serverless world to make your system-to-system transactions scalable and reliable, by decoupling the two sides of the integration. The Azure Queue service lets you put messages on the queue and process them asynchronously.

There are more complex services for handling messages between systems (like Azure Service Bus or Event Hub) that offer more complex features like event subscriptions, topics and more (maybe I’ll talk about them in other posts), but if you want to stay simple and cheap, the Azure Queue service is a great choice (you only pay for storage and operations).

The main “disadvantages” of Azure Queues I think are the following:

- No guaranteed ordering of messages

- No subscription system (you need to poll the queue to detect new messages; there is no push mechanism)

- Maximum message size is 64 KB

- Default message TTL (Time To Live) is 7 days (older versions of the service also capped it at 7 days)

but I don’t think these are real disadvantages in 99% of your integrations with Dynamics 365 Business Central.
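The 64 KB limit is the one you are most likely to hit with large JSON payloads. It is tighter than it looks when messages are Base64-encoded, which the Azure Functions queue trigger expects by default, because Base64 turns every 3 bytes into 4 characters. Below is a minimal sketch of a pre-send check. `QueueMessageSize` is a hypothetical helper, not part of the Azure SDK, and the code assumes the limit applies to the message text as stored.

```csharp
using System.Text;

// Hypothetical helper (not part of the Azure SDK): checks whether a JSON
// message fits into a single Azure Storage Queue message (max 64 KB).
public static class QueueMessageSize
{
    // 64 KB expressed in bytes
    public const int MaxStoredBytes = 64 * 1024;

    // Size of the message as stored: its UTF-8 byte count, or the length of
    // its Base64 form (4 characters per 3 bytes, padded) when Base64-encoded.
    public static int StoredSize(string message, bool base64Encoded)
    {
        int utf8Bytes = Encoding.UTF8.GetByteCount(message);
        return base64Encoded ? 4 * ((utf8Bytes + 2) / 3) : utf8Bytes;
    }

    // True if the message can be sent as a single queue message.
    public static bool Fits(string message, bool base64Encoded = true) =>
        StoredSize(message, base64Encoded) <= MaxStoredBytes;
}
```

With Base64 encoding, the largest UTF-8 payload that fits is 49,152 bytes (48 KB). For bigger documents, a common approach is to store the payload elsewhere (for example in Blob Storage) and enqueue only a reference to it.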

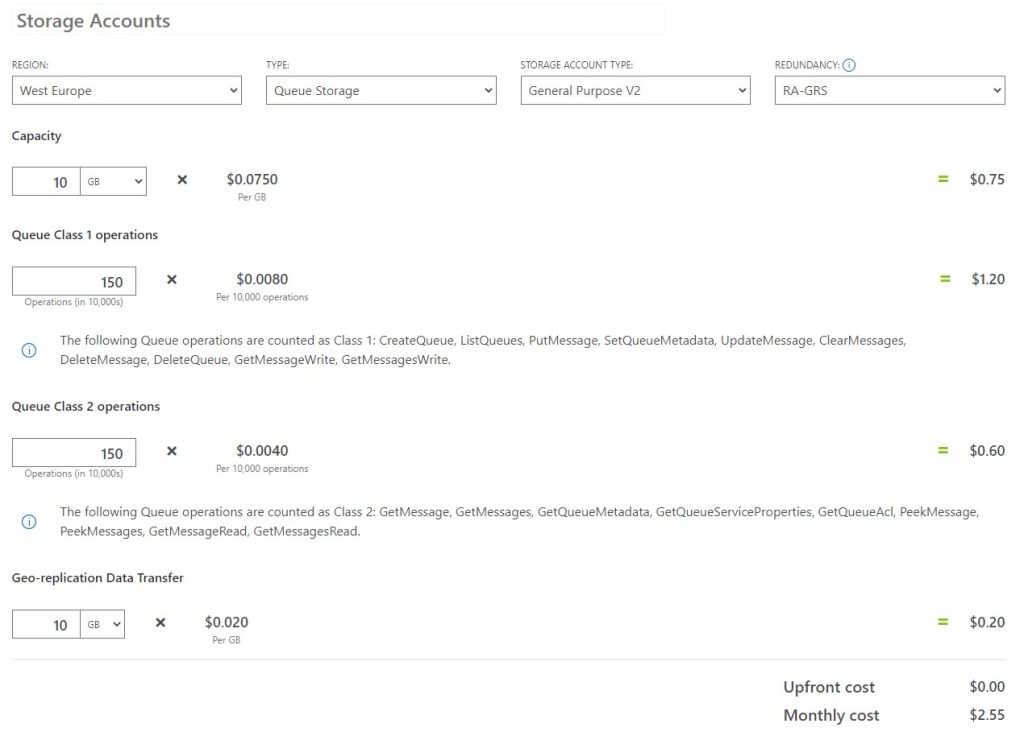

Regarding the cost of this service: you only pay for the storage used and the operations you perform on the queue (billed in blocks of 10,000). As an example, at the time of writing this post, read-access geo-redundant storage (RA-GRS) in the West Europe region costs $0.075 per GB for storage, $0.0080 per 10,000 class 1 queue operations, $0.0040 per 10,000 class 2 queue operations and $0.020 per GB for geo-replication data transfer. Imagine the following requirements:

- 10 GB storage for messages

- 5000 messages a day for class 1 operations and 5000 messages a day for class 2 operations

- 10 GB geo-replicated data transfer

The total cost of a queue handling this kind of load will be about $2.55 per month. Very cheap!
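Below is a small sketch of the arithmetic behind an estimate like this. It assumes a 30-day month, so 5,000 × 30 = 150,000 operations of each class, or 15 blocks of 10,000. It uses only the unit prices listed above, and the class and parameter names are hypothetical.

With those inputs it comes to about $1.13 per month. That is lower than the $2.55 quoted above, so the calculation behind that figure presumably included other inputs. Treat both numbers as indicative and check current prices for your region.

```csharp
using System;

// Hypothetical estimator: monthly cost of an Azure Storage Queue from unit prices.
// Prices vary by region and over time; pass in the current ones.
public static class QueueCostEstimator
{
    public static decimal Monthly(
        decimal storageGb, decimal pricePerGb,
        long class1Ops, decimal pricePer10kClass1,
        long class2Ops, decimal pricePer10kClass2,
        decimal geoReplicatedGb, decimal pricePerGeoGb)
    {
        // Operations are billed in (rounded-up) blocks of 10,000
        decimal class1Cost = Math.Ceiling(class1Ops / 10_000m) * pricePer10kClass1;
        decimal class2Cost = Math.Ceiling(class2Ops / 10_000m) * pricePer10kClass2;

        // Storage and geo-replication transfer are billed per GB
        return storageGb * pricePerGb
             + class1Cost
             + class2Cost
             + geoReplicatedGb * pricePerGeoGb;
    }
}
```

For example, `QueueCostEstimator.Monthly(10m, 0.075m, 150_000, 0.0080m, 150_000, 0.0040m, 10m, 0.020m)` returns 1.13: 0.75 for storage, 0.12 and 0.06 for operations, and 0.20 for geo-replication.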

But how can I use Azure Storage Queues in my Dynamics 365 Business Central integration projects?

For a serverless integration that rocks between Dynamics 365 Business Central and an external application EXTAPP, I suggest creating two queues (one for transactions from BC to EXTAPP and another for transactions from EXTAPP to BC). Each queue contains the messages for the transactions flowing in its direction.

The suggested schema is the following:

When Dynamics 365 Business Central needs to send a message (transaction) to the external application (EXTAPP):

- It calls an HTTP-triggered Azure Function, sending the message

- The Azure Function saves the message into the Azure queue

- A Queue Trigger Azure Function starts when a new item arrives in the queue. The queue message is automatically provided as input to the function, which processes it and passes it to the external application EXTAPP.

- If the transaction fails, you can handle the exception and process the message again.
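With a Queue Trigger function, the Azure Functions runtime already provides this retry. If the function throws, the message becomes visible again and is retried. After `maxDequeueCount` attempts (5 by default, configurable in host.json), it is moved to a poison queue named `<queue-name>-poison`, where you can inspect and reprocess it.

The sketch below is only an in-memory simulation of that policy, to make the flow explicit. `PoisonQueueSimulator` is a hypothetical class and does not use the Azure SDK.

```csharp
using System;
using System.Collections.Generic;

// In-memory simulation (not the Azure SDK) of the queue trigger retry policy:
// a failing message is retried until its dequeue count reaches the maximum,
// then it is moved to a poison queue.
public sealed class PoisonQueueSimulator
{
    private readonly Queue<(string Body, int DequeueCount)> _queue = new();
    public List<string> PoisonQueue { get; } = new();
    public List<string> Processed { get; } = new();
    public int MaxDequeueCount { get; }

    public PoisonQueueSimulator(int maxDequeueCount = 5) => MaxDequeueCount = maxDequeueCount;

    public void Enqueue(string body) => _queue.Enqueue((body, 0));

    // Delivers every message to the handler (e.g. the call to EXTAPP) until the queue is empty.
    public void Run(Action<string> handler)
    {
        while (_queue.Count > 0)
        {
            var (body, count) = _queue.Dequeue();
            count++; // each delivery increments the dequeue count
            try
            {
                handler(body);
                Processed.Add(body); // success: the message is deleted
            }
            catch (Exception)
            {
                if (count >= MaxDequeueCount)
                    PoisonQueue.Add(body);         // give up: park it for inspection
                else
                    _queue.Enqueue((body, count)); // visible again for a retry
            }
        }
    }
}
```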

When the external application (EXTAPP) needs to send a message (transaction) to Dynamics 365 Business Central:

- It calls an HTTP-triggered Azure Function, sending the message

- The Azure Function saves the message into the appropriate Azure queue

- A Timer Trigger Azure Function processes the incoming messages with the desired timing and batch size (a timer trigger lets you run a function on a schedule) and calls the appropriate Dynamics 365 Business Central APIs within the required operational limits.

- If the transaction fails, you can handle the exception and process the message again.

Why a Timer Trigger Azure Function in this second scenario? Simply because this way you control how transactions reach Business Central. If the external application sends hundreds of messages per second, the messages are safely placed in the queue and then processed at the pace that Dynamics 365 Business Central requires, avoiding throttling and retry mechanisms.
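Below is a minimal sketch of the throttling logic such a timer function could apply. It assumes a hypothetical budget of requests per time window; in practice, use the API limits documented for your Business Central environment. Each run takes at most a fixed number of messages from the backlog and leaves the rest for the next run. To keep the example self-contained, the backlog here is an in-memory `Queue<string>`.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical planner for a Timer Trigger function that calls Business Central.
public static class TimerBatchPlanner
{
    // Maximum messages one timer run may process so that all runs within
    // the window stay under the request budget (never more than the budget).
    public static int MaxMessagesPerRun(int requestsPerWindow, TimeSpan window, TimeSpan timerInterval)
    {
        double runsPerWindow = window.TotalSeconds / timerInterval.TotalSeconds;
        return (int)Math.Min(requestsPerWindow, Math.Floor(requestsPerWindow / runsPerWindow));
    }

    // Takes the next batch from the backlog; remaining messages wait for the next run.
    public static List<string> NextBatch(Queue<string> backlog, int maxMessages)
    {
        var batch = new List<string>();
        while (batch.Count < maxMessages && backlog.TryDequeue(out var message))
            batch.Add(message);
        return batch;
    }
}
```

For example, `MaxMessagesPerRun(600, TimeSpan.FromMinutes(5), TimeSpan.FromMinutes(1))` returns 120. In a real function, the backlog would be the Azure queue, read with `ReceiveMessages` (which returns at most 32 messages per call). Each message would be deleted only after the corresponding Business Central call succeeds.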

The messages exchanged in every transaction are JSON objects, saved into the queues and then processed as needed.
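A small envelope for these JSON messages keeps both sides consistent. Below is a sketch using System.Text.Json, assuming .NET 5 or later. The `TransactionMessage` record and its fields are hypothetical, not a Business Central or Azure schema. A message id is worth including because queue delivery is at-least-once: the receiver may see the same message twice and can use the id to skip duplicates.

```csharp
#nullable enable
using System;
using System.Text.Json;

// Hypothetical envelope for the JSON messages exchanged through the queues.
public sealed record TransactionMessage(
    string MessageId,     // unique id, lets the receiver skip duplicate deliveries
    string Source,        // e.g. "BC" or "EXTAPP"
    string EntityType,    // e.g. "SalesOrder"
    DateTime CreatedUtc,  // when the sender created the message
    JsonElement Payload); // the business data itself

public static class TransactionMessageSerializer
{
    // Web defaults: camelCase property names, case-insensitive reading
    private static readonly JsonSerializerOptions Options = new(JsonSerializerDefaults.Web);

    public static string ToJson(TransactionMessage message) =>
        JsonSerializer.Serialize(message, Options);

    public static TransactionMessage? FromJson(string json) =>
        JsonSerializer.Deserialize<TransactionMessage>(json, Options);
}
```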

In an Azure Function app, you can write a message into a queue (creating the queue if it doesn’t exist yet) with the following snippet:

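```csharp
using System;
using Azure.Storage.Queues;

// Place this method in a class of your Function app
public void InsertMessage(string queueName, string message)
{
    // Get the connection string from the app settings
    // (Function App settings are exposed as environment variables)
    string connectionString = Environment.GetEnvironmentVariable("StorageConnectionString");

    // Base64-encode messages: a Queue Trigger Azure Function expects Base64 by default
    var options = new QueueClientOptions { MessageEncoding = QueueMessageEncoding.Base64 };

    // Instantiate a QueueClient which will be used to create and manipulate the queue
    QueueClient queueClient = new QueueClient(connectionString, queueName, options);

    // Create the queue if it doesn't already exist
    queueClient.CreateIfNotExists();

    // Send a message to the queue
    queueClient.SendMessage(message);
}
```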

An Azure Function with a Queue Trigger that polls a queue called d365bc-queue has the following skeleton:

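```csharp
using Microsoft.Azure.WebJobs;
using Microsoft.Extensions.Logging;

public static class D365BCQueueFunctions
{
    [FunctionName("QueueTrigger")]
    public static void QueueTrigger(
        // Connection is the name of the app setting that holds the storage connection string
        [QueueTrigger("d365bc-queue", Connection = "StorageConnectionString")] string myQueueItem,
        ILogger log)
    {
        // If the function completes without throwing, the runtime deletes the message
        log.LogInformation($"C# function processed: {myQueueItem}");
    }
}
```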

Processing and removing a message from an Azure Queue requires two steps:

- Getting the message with a call to the ReceiveMessages method. The retrieved message becomes invisible to any other code reading messages from this queue for the duration of the visibility timeout (30 seconds by default).

- Removing the message from the queue by calling the DeleteMessage method.

This two-step process of removing a message ensures that if your code fails to process a message due to a hardware or software failure, another instance of your code can get the same message and try again.
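It helps to see in a few lines why this pattern is safe. The code below is an in-memory model with hypothetical names, not the Azure SDK. Each receive hides the message until its visibility timeout expires and issues a new pop receipt, and only a delete that presents the latest pop receipt removes the message. A consumer that crashes before deleting simply lets the message reappear for another reader.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Linq;

// In-memory model (not the Azure SDK) of the receive/delete pattern.
public sealed class VisibilityQueueModel
{
    private sealed class Entry
    {
        public string Id = "";
        public string Body = "";
        public DateTime VisibleAt;   // hidden until this moment
        public string? PopReceipt;   // receipt issued by the latest receive
    }

    private readonly List<Entry> _entries = new();

    public int Count => _entries.Count;

    public void Send(string id, string body, DateTime now) =>
        _entries.Add(new Entry { Id = id, Body = body, VisibleAt = now });

    // Step 1: return the first visible message and hide it for the timeout.
    public (string Id, string Body, string PopReceipt)? Receive(DateTime now, TimeSpan visibilityTimeout)
    {
        var entry = _entries.FirstOrDefault(e => e.VisibleAt <= now);
        if (entry is null) return null;
        entry.VisibleAt = now + visibilityTimeout;
        string receipt = Guid.NewGuid().ToString();
        entry.PopReceipt = receipt;
        return (entry.Id, entry.Body, receipt);
    }

    // Step 2: delete succeeds only with the pop receipt of the latest receive.
    public bool Delete(string id, string popReceipt) =>
        _entries.RemoveAll(e => e.Id == id && e.PopReceipt == popReceipt) > 0;
}
```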

A sample of this process is the following:

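```csharp
using System;
using Azure.Storage.Queues;
using Azure.Storage.Queues.Models;

// Place this method in a class of your Function app
public void DequeueMessage(string queueName)
{
    // Get the connection string from the app settings
    string connectionString = Environment.GetEnvironmentVariable("StorageConnectionString");

    // Use the same message encoding that was used when sending
    var options = new QueueClientOptions { MessageEncoding = QueueMessageEncoding.Base64 };

    // Instantiate a QueueClient which will be used to manipulate the queue
    QueueClient queueClient = new QueueClient(connectionString, queueName, options);

    if (queueClient.Exists())
    {
        // Get the next message (hidden from other readers until the visibility timeout expires)
        QueueMessage[] retrievedMessages = queueClient.ReceiveMessages(maxMessages: 1);

        // The queue may be empty
        if (retrievedMessages.Length == 0)
            return;

        // Process the message
        Console.WriteLine($"Dequeued message: '{retrievedMessages[0].Body}'");

        // Delete the message only after it has been processed successfully
        queueClient.DeleteMessage(retrievedMessages[0].MessageId, retrievedMessages[0].PopReceipt);
    }
}
```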

The goal of this post is just to give you an idea of how to handle integrations reliably in the cloud. A common question I receive when talking about these topics is: can I interact with the Azure Queue directly from AL, avoiding the Azure Functions?

The answer is YES. The Azure Queue Storage service exposes REST APIs for that, and you can call these APIs directly from your extension’s code. Some time ago I also had in mind to propose a pull request to the BC Dev Team for an AL module for interacting with Azure Queues; I only need to find the time to do it, and maybe we could have it embedded into the Base App. Do you think it could be interesting?
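Here is a C# sketch of the Put Message request, to give an idea of what calling the REST API directly involves. An AL extension would build the same request with the HttpClient, HttpRequestMessage and HttpContent data types. The sketch assumes authorization through a SAS token with permission to add messages. The account, queue and token values are placeholders, and the code only builds the request without sending it.

```csharp
using System;
using System.Net.Http;
using System.Text;

// Hypothetical helper: builds (but does not send) a "Put Message" request
// against the Azure Queue Storage REST API, authorized with a SAS token.
public static class QueueRestRequest
{
    public static HttpRequestMessage BuildPutMessage(
        string accountName, string queueName, string sasToken, string messageJson)
    {
        // Base64-encode the JSON so a Queue Trigger Azure Function (Base64 by default)
        // can read it; Base64 output also contains no XML-special characters.
        string text = Convert.ToBase64String(Encoding.UTF8.GetBytes(messageJson));

        // Put Message expects the text inside <QueueMessage><MessageText>...</MessageText></QueueMessage>
        string body = $"<QueueMessage><MessageText>{text}</MessageText></QueueMessage>";

        // POST https://<account>.queue.core.windows.net/<queue>/messages?<SAS token>
        var uri = new Uri(
            $"https://{accountName}.queue.core.windows.net/{queueName}/messages?{sasToken.TrimStart('?')}");

        return new HttpRequestMessage(HttpMethod.Post, uri)
        {
            Content = new StringContent(body, Encoding.UTF8, "application/xml")
        };
    }
}
```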

But despite this: do you really always need to handle this in AL? You can do that if you want, but it does not mean that you should! Handling integrations via Azure Functions gives you extreme scalability and more. In big integrations where exchanged messages must be reliable and you have lots of events, using Function apps is recommended.

I don’t consider this post finished (I will probably provide a real sample of this type of integration), but I hope it gives you some hints on improving your integrations with your favourite ERP 😉

P.S. I also hope I have answered the questions received via social media in recent days… if not, please write to me.

Back to Nav 5 with CommercePortal + MSMQ integration 🙂

We tried to use the BC Connector to push some data into another system. Did you know that the BC Connector doesn’t support collections in the webhook? So if someone changes, for example, the price on all items, BC sends one post containing a collection instead of individual posts. But the connector cannot handle this. There is also an undocumented limit of 300 calls/minute. In the end this meant we lost records. There is no notification or any way to be notified when this happens.

We tried using an HTTP trigger instead of the BC Connector for outgoing calls. But when the number of calls is very high, the queue becomes unbelievably slow. The solution was to create an Azure Function. With it we were able to send 100’000 records without any issue.

Thanks for this feedback. I absolutely agree with you: some out-of-the-box tools are simply not ready to scale or to manage a large number of transactions well. Azure Functions is absolutely my preferred technology, and Azure Queues are useful for decoupling systems. I have spent a lot of time telling AL developers to avoid doing everything with standard tools; feedback like yours is very valuable. 👍🏻

The last time I tried using a BC webhook to notify an Azure Function, the results were not good. Azure Functions in consumption mode have a warmup after a certain period of inactivity. When BC tries to notify a “sleeping” function, it times out before it gets an answer. That call is simply lost, and there is no way to know it happened. That kind of solution is totally unreliable in most cases. I don’t remember the last time a customer told me that it doesn’t matter if some messages get lost.

Can you give more details about the method you use to call an Azure Function from BC?

You’re absolutely right. Azure Functions in the Consumption plan have cold starts. I usually avoid this by using Azure Application Insights availability tests: you can create a basic URL ping test, which makes an HTTP call every 5 to 10 minutes, depending on what you prefer. These ping tests are quite basic, but also free!

Another way: create a Logic App with a recurrence (timer) trigger that pings your function URL every 5 minutes.

The other option is obviously the Azure Functions Premium plan.

You mentioned: “There are more complex services for handling messages between systems (like Azure Service Bus or Event Hub) that offers more complex features like event subscriptions”. I would like to implement this in a project I’m working on, where an external application sends a message to an Azure Service Bus with a Session ID and expects a response with the same Session ID (request-response pattern). I know it’s possible to have an event subscription on this in Azure Functions, with the Azure Function calling a Business Central API and running some business logic, which in turn sends a message to the Azure Service Bus with the same Session ID.

I was wondering if it was possible to cut out the Azure Function and have the event subscription in Business Central. In general, is it possible to have an external event subscription in Business Central? I’ve found nothing on the subject (just External Business Events). If this is indeed possible, I am a little worried about how to control the load on Business Central.
