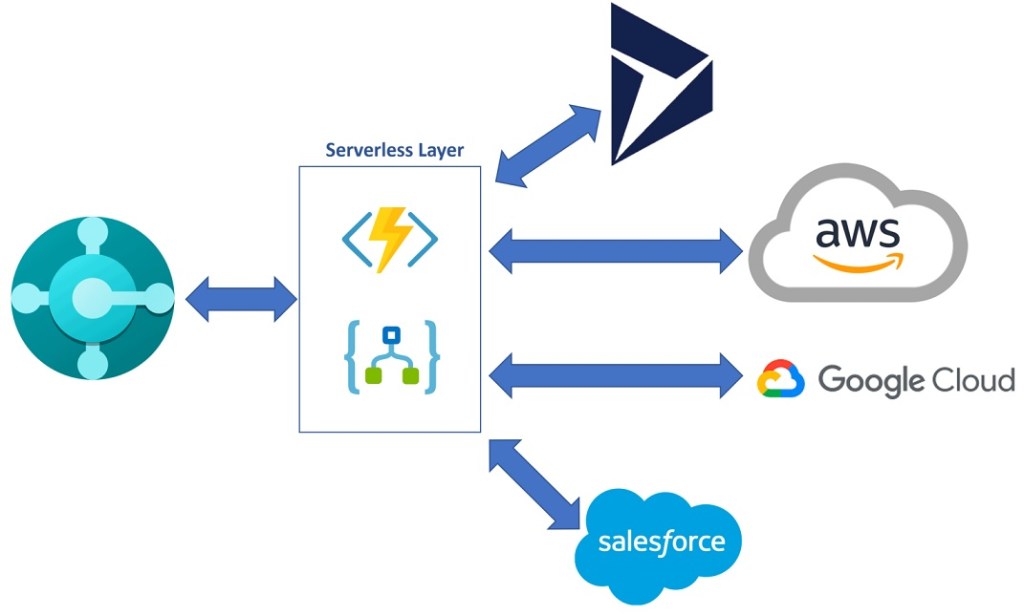

Recently, for a large cloud-based project, I needed to assess the different cloud services in use. The project includes Dynamics 365 Business Central SaaS and several other cloud applications (some hosted on different cloud providers), connected together through serverless processes, as in the following schema:

For this project, we have a serverless layer (hosted on Azure) where different cloud services (Azure Functions, Azure Logic Apps, Azure Service Bus etc.) handle the communication between the ERP (Dynamics 365 Business Central) and the other cloud applications. These interactions are mainly API calls, data processing and message exchanges between the various cloud apps.

One of the assessment’s requirements was to measure the performance of the different cloud processes in use and extract benchmarks for them. I wanted to measure how each endpoint of my serverless layer responds, in order to discover whether some services perform poorly and could be improved or handled differently.

Azure Application Insights was not enough for this, mainly because not all of these services are managed by me and some are not on a subscription that we manage. So, how can I run a general service test with an external tool that gives me performance metrics?

I had an idea: in the past I’ve used BenchmarkDotNet to benchmark .NET applications. Why not use this tool for cloud services too?

BenchmarkDotNet is a well-known library for benchmarking .NET applications. It helps you turn methods into benchmarks, track their performance and share reproducible measurement experiments. A benchmark is (in simple terms) a test that you run to find out whether a change in a piece of code has improved, worsened or not affected its performance.

Once the benchmarking process has run, a summary of the results is displayed in the console window, with detailed information about the application’s performance. You can also use the R engine to display charts for different metrics.

How to use BenchmarkDotNet to monitor a cloud service?

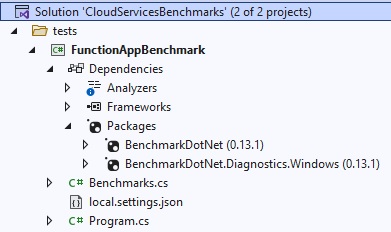

To start creating my benchmarking project, I’m using Visual Studio. Here you can create a new console application and install the BenchmarkDotNet NuGet packages. Here is my project structure:

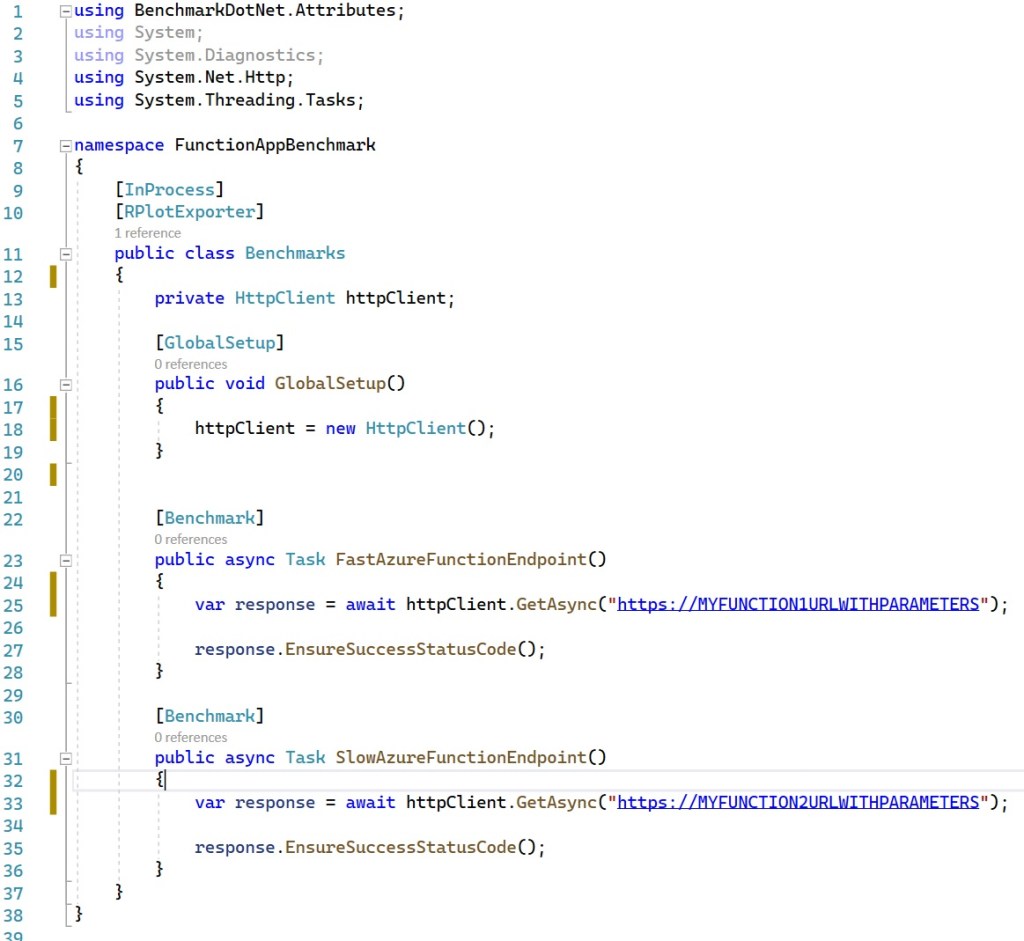

Then in your project you can write a class with the methods that you want to measure and mark them with the Benchmark attribute. Here is what my benchmark class (file Benchmark.cs) looks like (I’ve simplified it):

The FunctionAppBenchmark class has a method marked with the [GlobalSetup] attribute. A method marked with [GlobalSetup] is executed only once per benchmarked method, after the benchmark parameters are initialized and before all the benchmark method invocations. This is where I do my initializations, and in this case I’m creating an HttpClient object for performing HTTP calls to my cloud endpoints.

Then, the class contains two test methods, marked with the [Benchmark] attribute. Each of these methods is responsible for calling a particular endpoint (cloud service) and retrieving the results. These are the methods that contain my tests.
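As an illustration of the structure described above, here is a minimal sketch of such a class. It is not the original listing: the class name `Benchmarks` follows the later references in this post, the two method names are the ones that appear in the results, and the endpoint URLs are placeholders that you need to replace with your own.

```csharp
using System.Net.Http;
using System.Threading.Tasks;
using BenchmarkDotNet.Attributes;

// [RPlotExporter] asks BenchmarkDotNet to produce R charts (requires R to be installed).
[RPlotExporter]
public class Benchmarks
{
    // Placeholder URLs (assumptions): replace them with the endpoints you want to measure.
    private const string FastEndpointUrl = "https://example.invalid/api/fast";
    private const string SlowEndpointUrl = "https://example.invalid/api/slow";

    private HttpClient _httpClient;

    // Creates the HttpClient before the measured invocations start.
    [GlobalSetup]
    public void Setup()
    {
        _httpClient = new HttpClient();
    }

    // Releases the HttpClient after the measured invocations have finished.
    [GlobalCleanup]
    public void Cleanup()
    {
        _httpClient.Dispose();
    }

    // Each benchmark calls one endpoint and reads the full response body.
    // GetStringAsync throws if the endpoint returns a non-success status code.
    [Benchmark]
    public async Task<string> FastAzureFunctionEndpoint()
    {
        return await _httpClient.GetStringAsync(FastEndpointUrl);
    }

    [Benchmark]
    public async Task<string> SlowAzureFunctionEndpoint()
    {
        return await _httpClient.GetStringAsync(SlowEndpointUrl);
    }
}
```

Keep in mind that a benchmark like this measures the whole round trip as seen from the machine running it (network latency included), not only the processing time of the cloud service.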

BenchmarkDotNet also supports Exporters. An exporter allows you to export the results of your benchmarks in different formats. By default, the result files are placed in the .\BenchmarkDotNet.Artifacts\results directory. The default exporters are csv, html and markdown, but other, more advanced exporters like XML, JSON and Plots can be configured.
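One way to enable extra exporters is through attributes on the benchmark class. The sketch below is only an example: the class `ExportedBenchmarks` and its `Sum` method are hypothetical and exist just to have something to measure, while the attributes are the ones shipped in the `BenchmarkDotNet.Attributes` namespace.

```csharp
using BenchmarkDotNet.Attributes;

// Exporters that are part of the default configuration, listed here explicitly.
[CsvExporter]
[HtmlExporter]
[MarkdownExporter]
// Additional exporters that are not enabled by default.
[JsonExporterAttribute.Full]
[XmlExporterAttribute.Full]
public class ExportedBenchmarks
{
    // Trivial, hypothetical workload: adds the integers from 0 to 99.
    [Benchmark]
    public int Sum()
    {
        int total = 0;
        for (int i = 0; i < 100; i++)
        {
            total += i;
        }
        return total;
    }
}
```

With these attributes in place, the results directory should contain one file per exporter for each benchmark class that you run.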

When you execute tests, BenchmarkDotNet can also generate charts by using the R programming language to plot the results from the generated *-measurements.csv file (file that contains the output of the tests).

In this project, I’m using the RPlotExporter in order to have charts related to the performances of my monitored services (see the attribute [RPlotExporter] of the Benchmarks class).

To do that, you need to:

- Get the latest version of R for your OS and install it.

- Add R’s \bin\ directory to the PATH system environment variable (for example, on my machine it’s C:\Program Files\R\R-4.2.0\bin).

- Restart Visual Studio if open (in this way it reloads the updated PATH variable).

NOTE: if you get the error “RPlotExporter couldn’t find Rscript.exe in your PATH and no R_HOME environment variable is defined”, it is because you have not completed the third step above.
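If you want to check the R setup before launching a long benchmark run, a small helper like the following can be called from your console app. It is only a sketch: the `RscriptLocator` class is a hypothetical name, and the lookup (R_HOME first, then every PATH entry) approximates what the error message describes rather than reproducing BenchmarkDotNet’s own code.

```csharp
using System;
using System.IO;
using System.Linq;
using System.Runtime.InteropServices;

public static class RscriptLocator
{
    // Returns the full path of the Rscript executable, or null when it cannot be found.
    public static string Find()
    {
        string exeName = RuntimeInformation.IsOSPlatform(OSPlatform.Windows)
            ? "Rscript.exe"
            : "Rscript";

        // First look under %R_HOME%\bin, if R_HOME is defined.
        string rHome = Environment.GetEnvironmentVariable("R_HOME");
        if (!string.IsNullOrEmpty(rHome))
        {
            string candidate = Path.Combine(rHome, "bin", exeName);
            if (File.Exists(candidate))
            {
                return candidate;
            }
        }

        // Then scan every directory listed in PATH.
        string path = Environment.GetEnvironmentVariable("PATH") ?? string.Empty;
        return path.Split(Path.PathSeparator)
                   .Select(dir => dir.Trim().Trim('"'))
                   .Where(dir => dir.Length > 0)
                   .Select(dir => Path.Combine(dir, exeName))
                   .FirstOrDefault(File.Exists);
    }
}
```

Calling `RscriptLocator.Find()` at the start of `Main` and printing a warning when it returns null gives you an early hint that the plots will not be generated.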

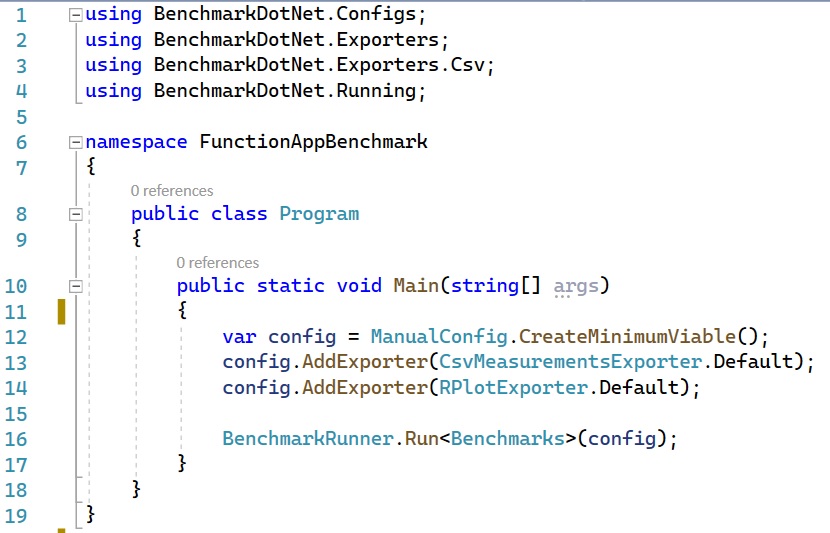

The Program.cs file contains the Main method of my console app. It calls the BenchmarkRunner.Run method, which runs your benchmarks and prints the results to the console output.

The Program.cs file is defined as follows:

Here I’m declaring a configuration with the proper exporters for generating the plots and then I call the BenchmarkRunner.Run method by passing the Benchmarks class with its configuration.
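A minimal sketch of what such a Program.cs could look like is shown here. It is not the original listing: it assumes a benchmark class named `Benchmarks` and a recent BenchmarkDotNet version in which `AddExporter` is available on the configuration (older versions used a different method name).

```csharp
using BenchmarkDotNet.Configs;
using BenchmarkDotNet.Exporters;
using BenchmarkDotNet.Exporters.Csv;
using BenchmarkDotNet.Running;

public static class Program
{
    public static void Main(string[] args)
    {
        // Start from the default configuration (console logger, default exporters)
        // and add the exporters needed for the R plots.
        var config = ManualConfig.Create(DefaultConfig.Instance)
            .AddExporter(CsvMeasurementsExporter.Default) // writes the *-measurements.csv file
            .AddExporter(RPlotExporter.Default);          // runs Rscript to draw the charts

        // Runs every [Benchmark] method of the Benchmarks class with this configuration.
        BenchmarkRunner.Run<Benchmarks>(config);
    }
}
```

Declaring the exporters in code instead of through attributes keeps the benchmark class free of reporting concerns, which can help if you want to reuse the same class with different configurations.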

To execute the benchmarks, you need to compile the project and then run the console app. First, build the project in Release mode:

dotnet build -c Release

Please remember to use only the Release configuration of your project for your benchmarks; otherwise the results will not reflect reality. If you forget to change the configuration, BenchmarkDotNet will print a warning.

Then, start the console application to execute the benchmarks. With my project path and structure, the command is the following:

dotnet .\tests\FunctionAppBenchmark\bin\Release\netcoreapp3.1\FunctionAppBenchmark.dll

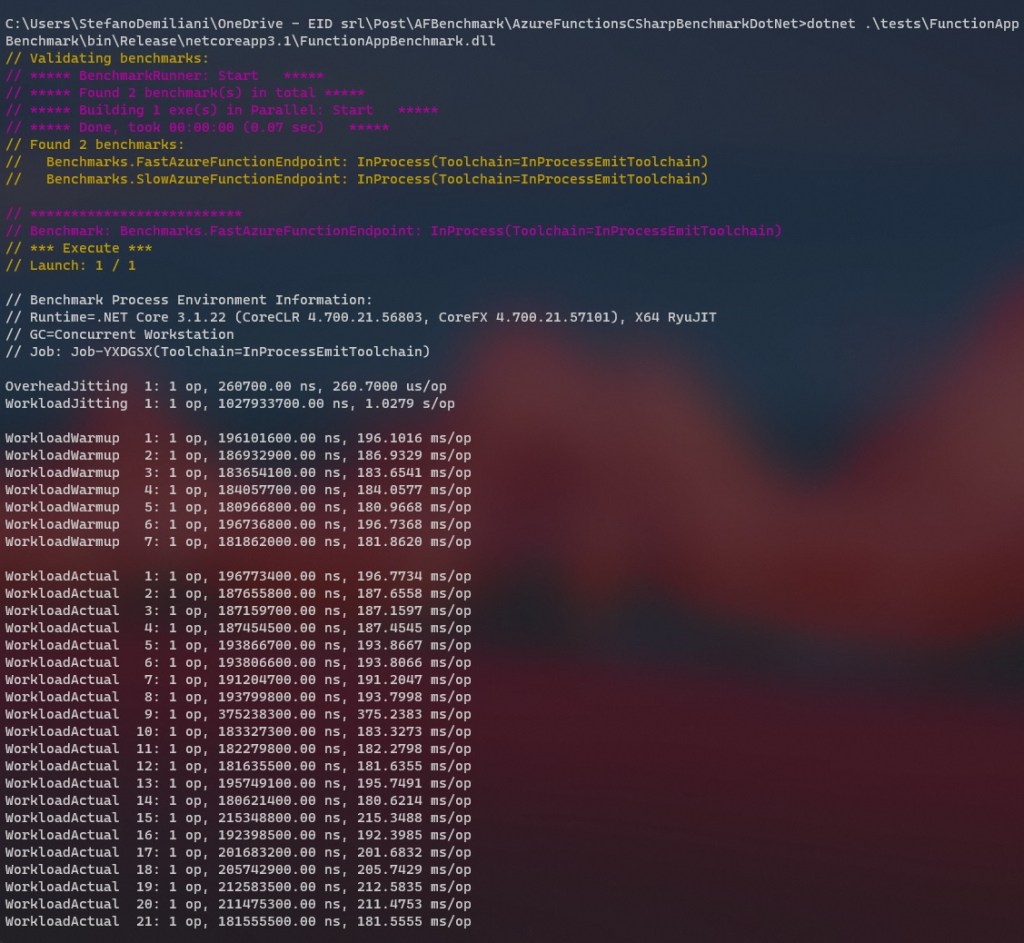

The benchmark execution starts:

Reading the benchmarks results

The tool first writes the benchmark output to the console. Here is the result I got:

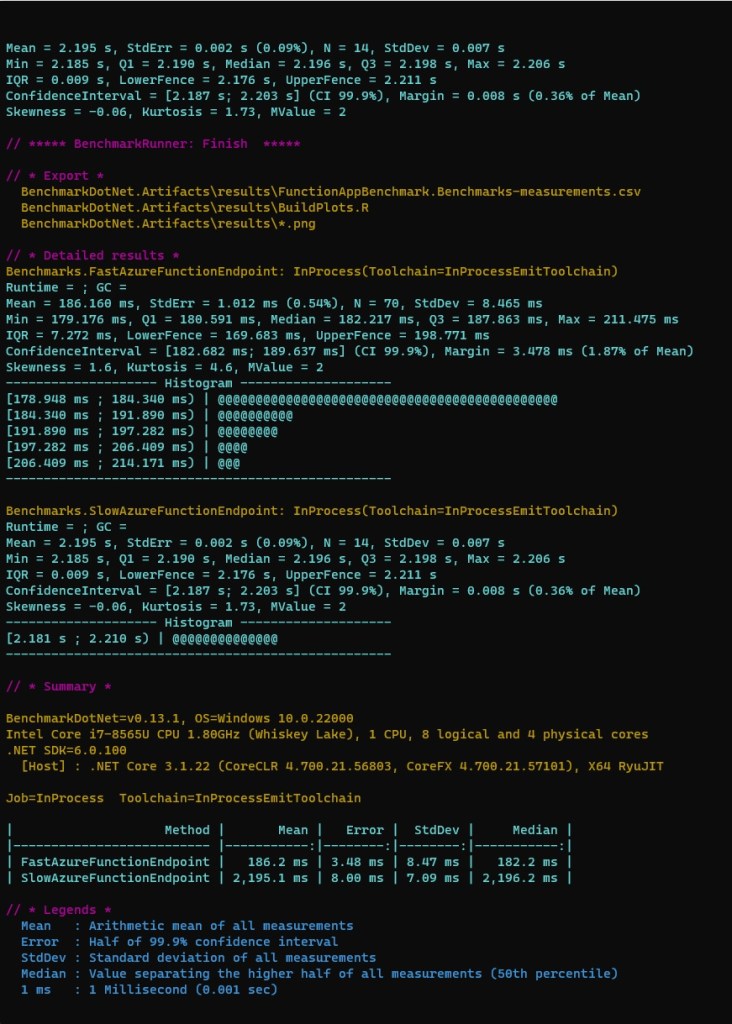

The benchmark results are also output to the following artifact directory: \bin\Release\netcoreapp3.1\BenchmarkDotNet.Artifacts\results\

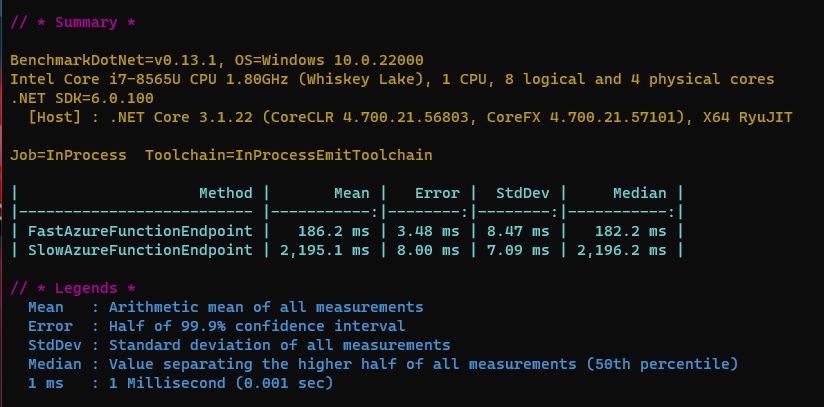

I think the most interesting part to read is the summary:

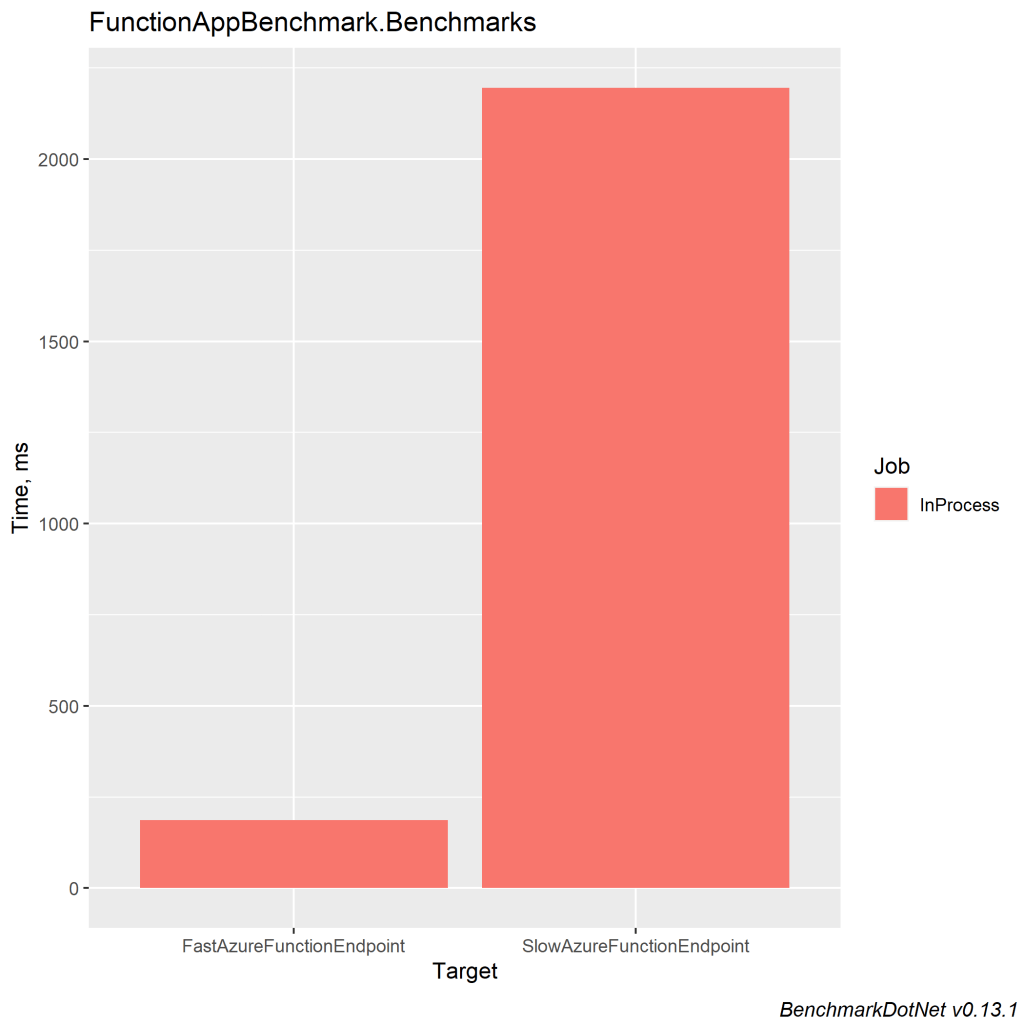

Here you can immediately see the response time of each endpoint. In my case, FastAzureFunctionEndpoint takes 186.2 ms to respond, while SlowAzureFunctionEndpoint takes 2195.1 ms. Maybe something to work on here?

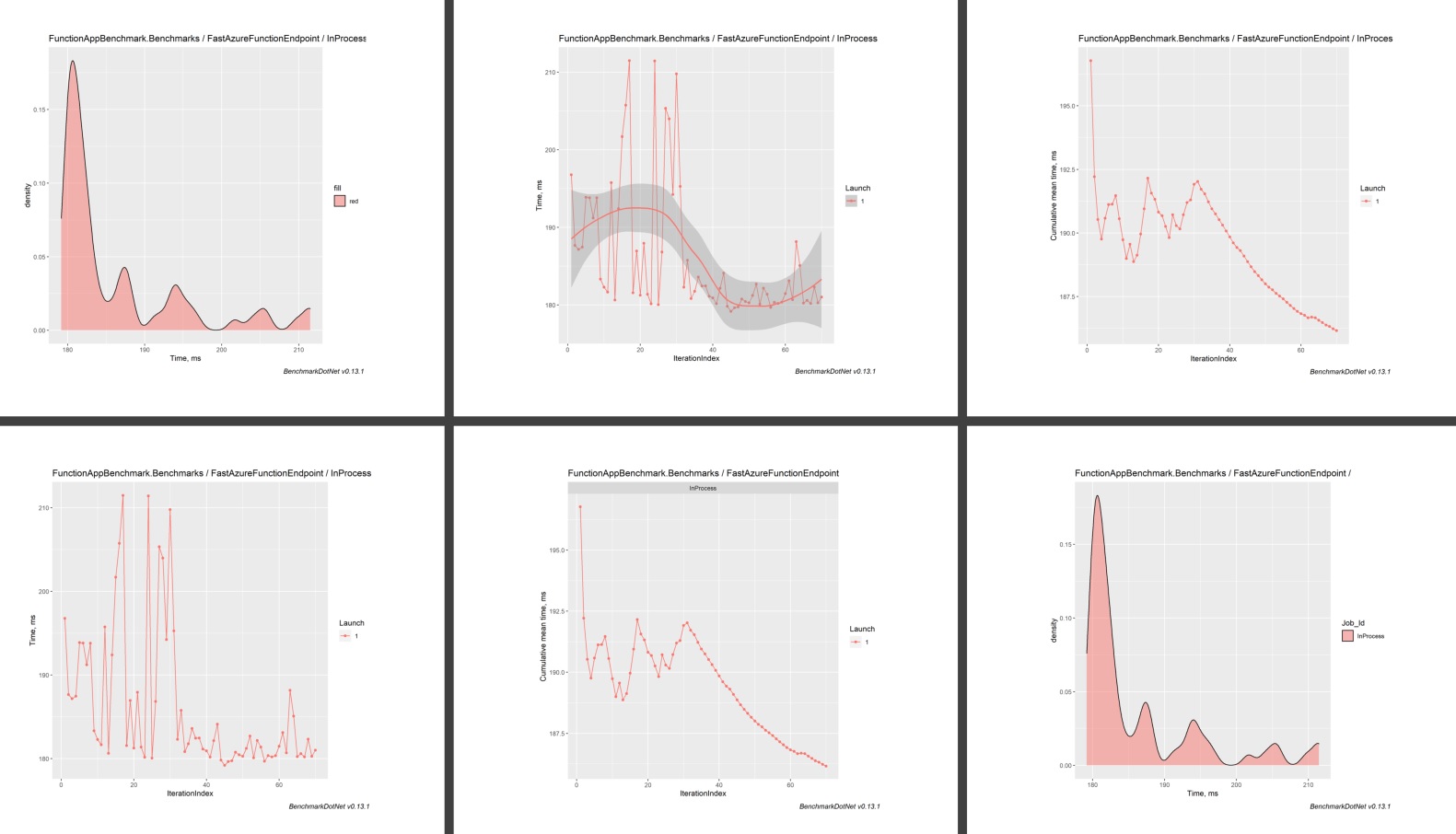

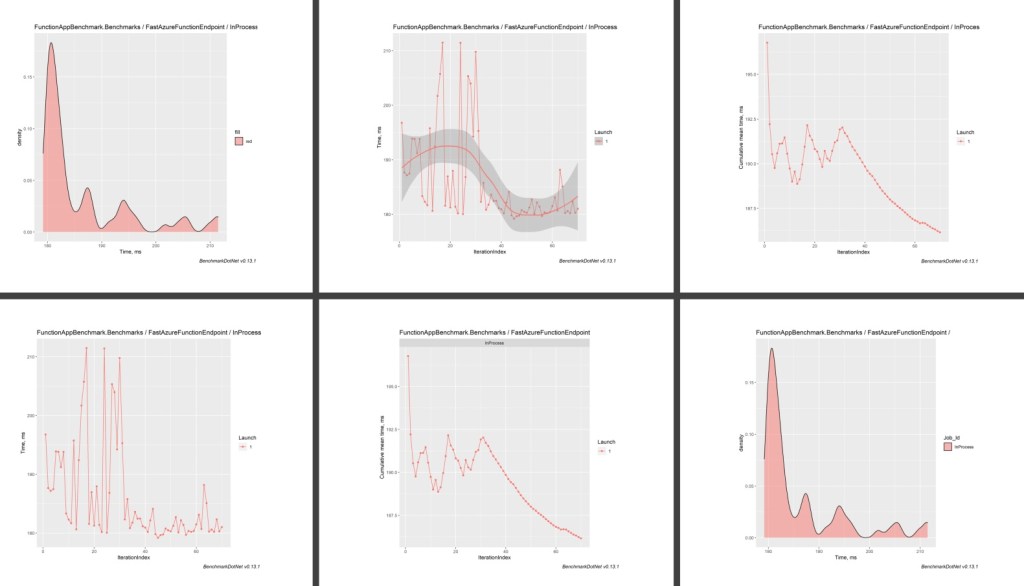

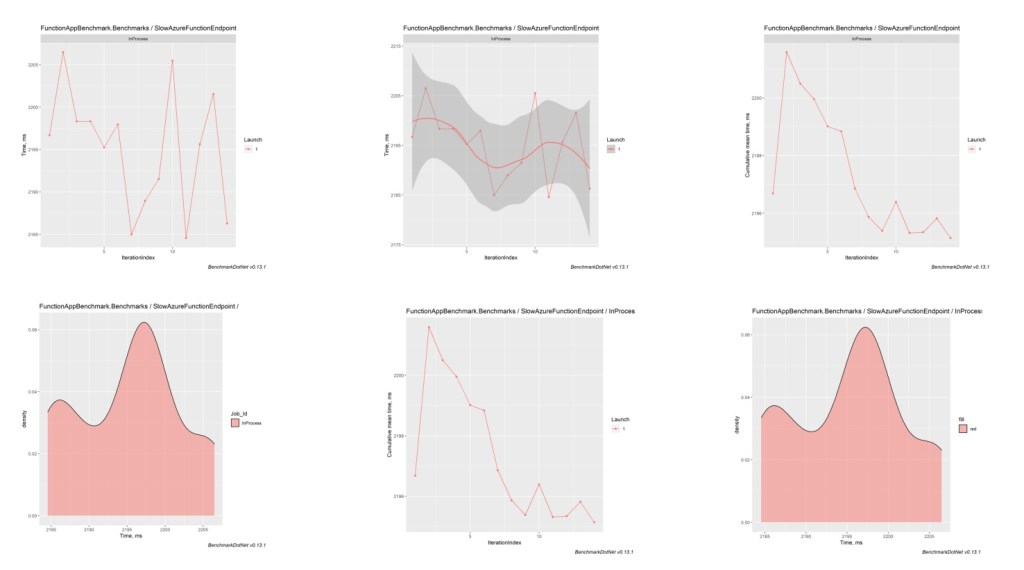

If you have enabled the R exporter, the \bin\Release\netcoreapp3.1\BenchmarkDotNet.Artifacts\results\ folder will also contain interesting charts, like the following:

Metrics for the FastAzureFunctionEndpoint endpoint:

Metrics for the SlowAzureFunctionEndpoint endpoint:

As you can see, this tool can be handy if you have external applications that talk to other cloud services or connect to the Dynamics 365 Business Central APIs and you want to monitor the performance of these connections (methods). By running a general benchmark of all your endpoints, you get a complete overview of the cloud services involved in the project.

Useful for you? Not sure, but it saved my day 🙂