Deploying Microsoft Dynamics NAV to the Azure cloud (IaaS) is now one of our first proposal on every new customer. When you have a customer that has embraced the cloud, it’s quite natural to start thinking on “going serverless” when implementing certain business processes.

In this post, I want to describe a real world scenario I’ve recently handled in a “serverless” way. The scenario is this: our customer has NAV deployed on Azure (IaaS). An external business partner actually collects orders from many sites and at the end of the day it uploads a ZIP archive containing a big CSV file with orders details to an FTP server shared by the headquarter. This CSV file must be parsed and then imported in NAV for orders creation.

How to handle it in a “serverless” way?

We’re on the cloud, so the natural idea was to using Azure Blob Storage for uploading the ZIP archive and then Azure Functions for performing the business operations. A custom Azure function checks for the ZIP file and then:

- It extracts the CSV from the ZIP file

- It parses the CSV file

- It loads the data into a table in the Azure SQL database by using SqlBulkCopy

- It calls a published NAV web service for automatic order creation (optional)

This is a diagram of the architecture:

In a previous post, I’ve talk about developing Azure Functions directly via the Azure Portal and using custom DLLs from that function. The Azure Function that handles the scenario described in this post is instead developed directly by using Visual Studio 2017 and the Visual Studio Tools for Azure Functions.

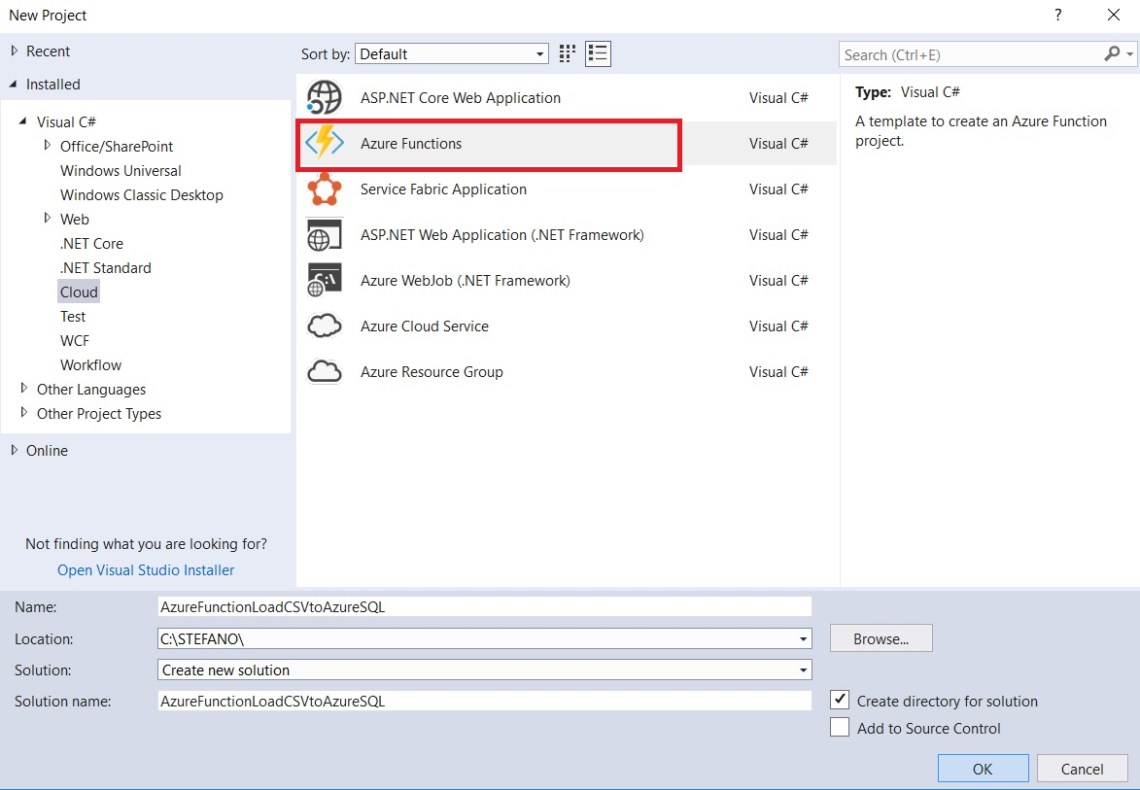

Let’s start by creating a new Azure Function project from Visual Studio:

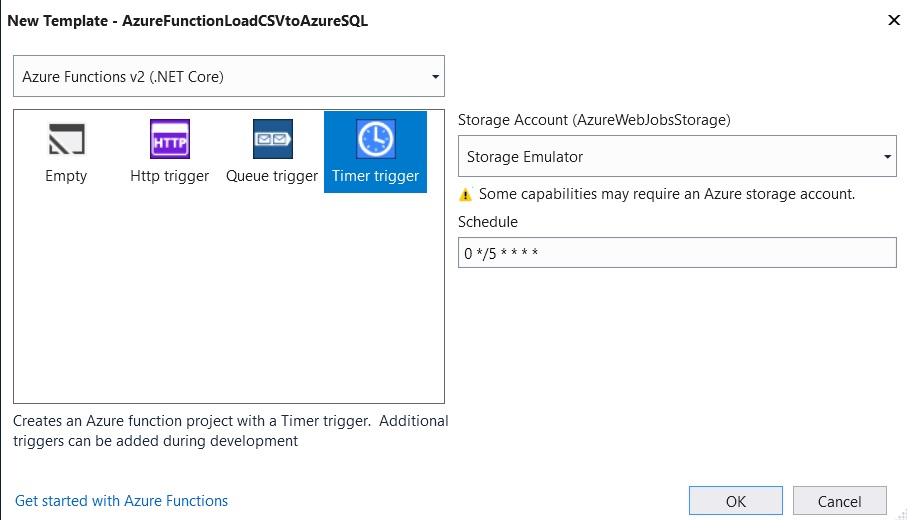

In the Azure Function template window, select Timer Trigger (because here we want a function that is triggered periodically, but obviosuly this could be changed as needed):

Here, you’ve to select the Storage Account (you can use the emulator while developing) and the Schedule (default is 5 minutes but you can change it as you want).

Now the Azure Function project is created. Here, we need to add some dependencies (added via NuGet packages). First, we need a reference to the WindowsAzure.Storage library (for working with Azure Blob Storage):

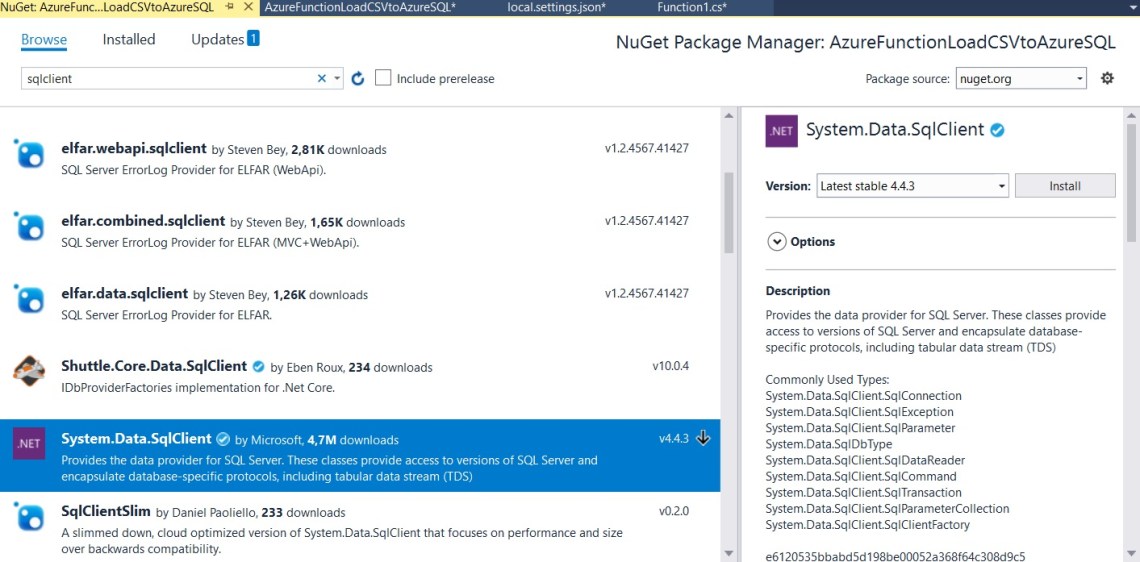

and then we need a reference to the System.Data.SqlClient library for working with Azure SQL:

After adding the references, we can implement our function code inside Visual Studio. The function template has a Run method with a TimerTrigger parameter. Our main function code is as follows:

[FunctionName("LoadCSVZipFileToAzureSQL")]

public static void Run([TimerTrigger("0 */5 * * * *")]TimerInfo myTimer, TraceWriter log) //Runs every 5 minutes

{

log.Info($"C# Timer trigger function executed at: {DateTime.Now}");

log.Info("Downloading ZIP archive from Azure Storage...");

ReadBlobStorage();

log.Info("ZIP archive downloaded successfully.");

log.Info("Extracting ZIP archive...");

var d = Directory.CreateDirectory($"{Path.GetTempPath()}\\orders_unzip");

Directory.Delete(d.FullName, true);

d = Directory.CreateDirectory($"{Path.GetTempPath()}\\orders_unzip");

ZipFile.ExtractToDirectory($"{Path.GetTempPath()}\\orders.zip", d.FullName);

log.Info("ZIP archive extracted.");

var filename = d.GetFiles("*.csv")[0].FullName;

log.Info($"CSV filename: {filename}");

DataTable sourceData = CreateDataTable(filename);

log.Info($"Data loaded from CSV");

log.Info("Writing data to Azure SQL + NAV...");

//Write the data to NAV

WriteToNAV(sourceData);

log.Info("Process terminated successfully");

}

With this code, every 5 minutes our function checks the Blob Storage for the ZIP file (via the ReadBlobStorage function). If found, it extracts the file in a temporary folder, parses the CSV, loads the data in a DataTable (via the CreateDataTable function)) and then calls the WriteToNAV function for saving the data in the NAV database.

The ReadBlobStorage method is defined as following:

private static void ReadBlobStorage()

{

var storageAccount = CloudStorageAccount.Parse(ConfigurationManager.AppSettings["AzureWebJobsStorage"]);

var blobClient = storageAccount.CreateCloudBlobClient();

var container = blobClient.GetContainerReference("order_container");

var blob = container.GetBlockBlobReference("Orders.zip");

blob.DownloadToFileAsync($"{Path.GetTempPath()}\\orders.zip", FileMode.CreateNew);

}

The CreateDataTable method is defined as follows:

private static DataTable CreateDataTable(string filename)

{

DataTable csvData = new DataTable();

csvData.Columns.Add("OrderNo");

csvData.Columns.Add("CustomerNo");

csvData.Columns.Add("ItemNo");

csvData.Columns.Add("Quantity");

using (var rd = new StreamReader(filename))

{

while (!rd.EndOfStream)

{

var fields = rd.ReadLine().Split(',');

csvData.Rows.Add(fields[0], fields[1], fields[2], fields[3]);

}

}

return csvData;

}

The WriteToNAV method takes in input the DataTable loaded from the CSV file and

- stores the data in an Azure SQL table using SqlBulkCopy (optimized for bulk insert)

- Calls an external class (DLL) where the connection with a NAV web service is managed)

The method code is as follows:

private static void WriteToNAV(DataTable data)

{

SqlBulkCopy bcp = new SqlBulkCopy(ConfigurationManager.AppSettings["AzureSQLConnectionString"]);

bcp.DestinationTableName = "External_Orders";

bcp.WriteToServer(data);

//Call NAV WS

NAVWSDAL.NAVWSDAL d = new NAVWSDAL.NAVWSDAL();

d.CallNAVWS(data);

}

Few words about point 2 above: in my Visual Studio project, I’ve created a Class Library project called NAVWSDAL. Here, I have a reference to a NAV web service. This class library has a public method CallNAVWS that taked a TableData in input and calls the NAV ws. This is the definition:

public class NAVWSDAL

{

public void CallNAVWS(DataTable data)

{

NAVWS ws = new NAVWS();

ws.Url = serviceUri;

ws.Credentials = new System.Net.NetworkCredential(WS_User, WS_Pwd, WS_Domain);

foreach (DataRow row in data.Rows)

{

//Read the Order fields and call a NAV WS codeunit

string result = ws.InsertOrder(row["OrderNo"].ToString(),row["CustomerNo"].ToString(),row["ItemNo"].ToString(),Convert.ToDecimal(row["Quantity"]));

}

}

}

In our Azure function code, there’s a reference to this class library. In this way, when we deploy the Azure Function also the external DLL will be automatically deployed on Azure.

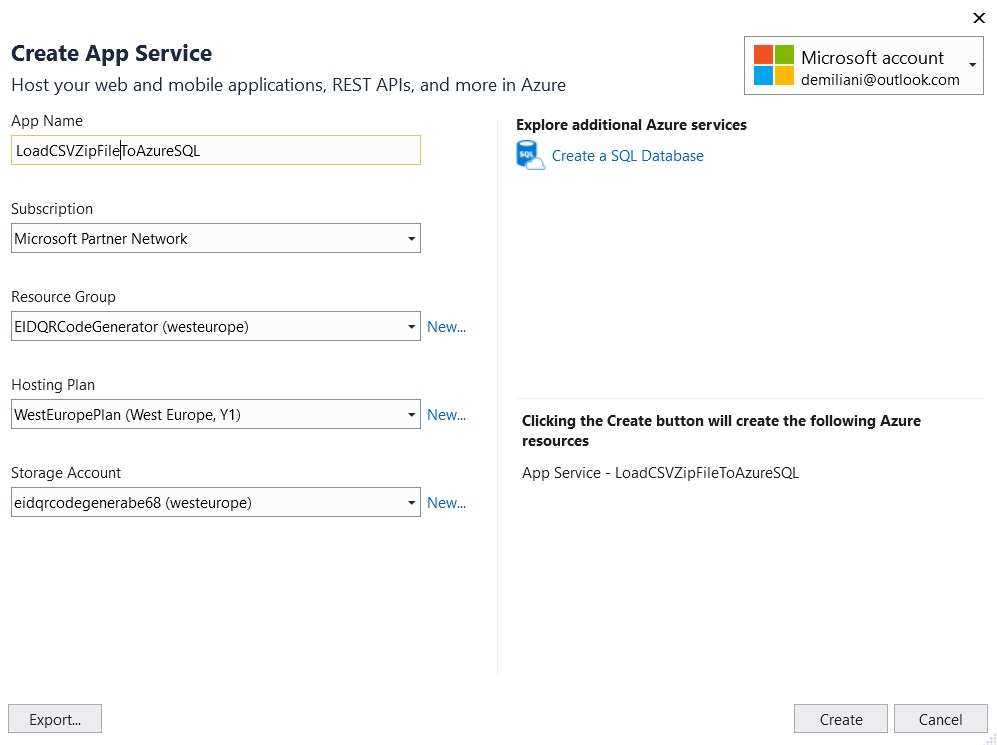

To deploy the Azure Function, in Solution Explorer, right-click the project and select Publish. Choose Create New and then Publish.

In the Create App Service window, we need to set the App name, select our Azure subscription, the Resource Group (Name of the resource group in which to create your function app), the Hosting Plan (create a Consumption plan in your region) and the Storage account:

After that, click Create. Visual Studio now will deploy your Azure Function in your Azure subscription. When deployed, you’ll have a public function url.

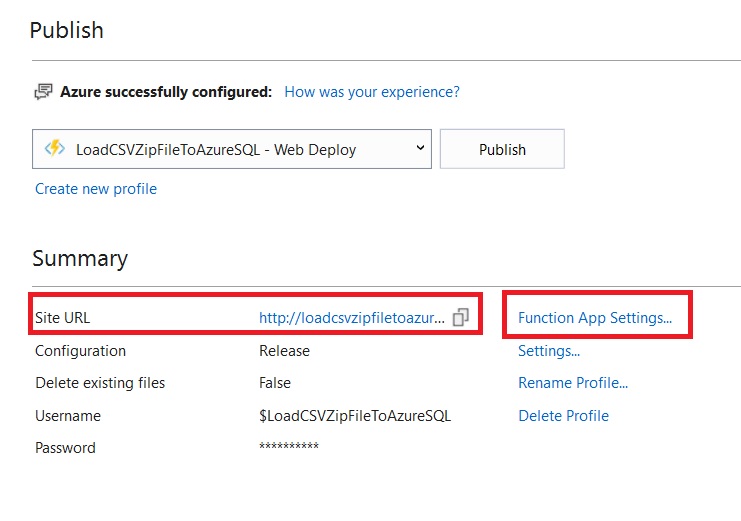

Any settings you’ve added in the local.settings.json file must be also added to the function app in Azure (these settings are not uploaded automatically when you publish the project). The easiest way to upload the required settings to your function app in Azure is to use the Function App Settings link :

Here you can create all your settings used by your Azure Function:

P.S. I’ve managed here the connection string with the Blob Storage and Azure SQL, there could be for sure improvements on this.

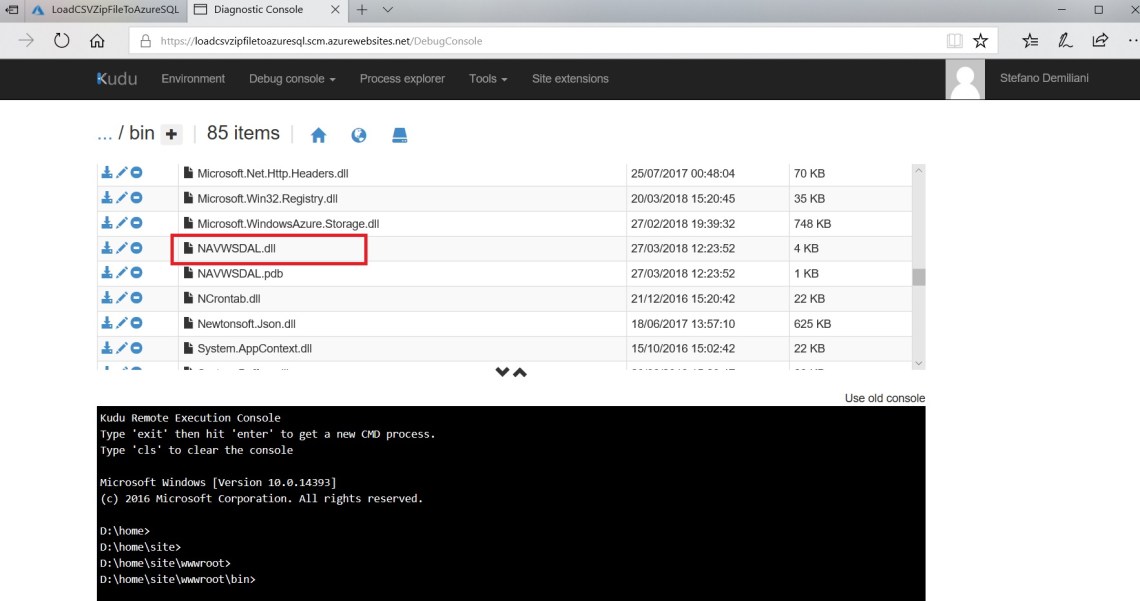

If you open a new browser window and type the url https://<yourfunctionname>.scm.azurewebsites.net (as I’ve described in a previous post) and from here yoy open the Kudu console and navigate to the BIN folder, you can see that Visual Studio has deployed for you all the external DLLs referenced by the project, included our custom DLL:

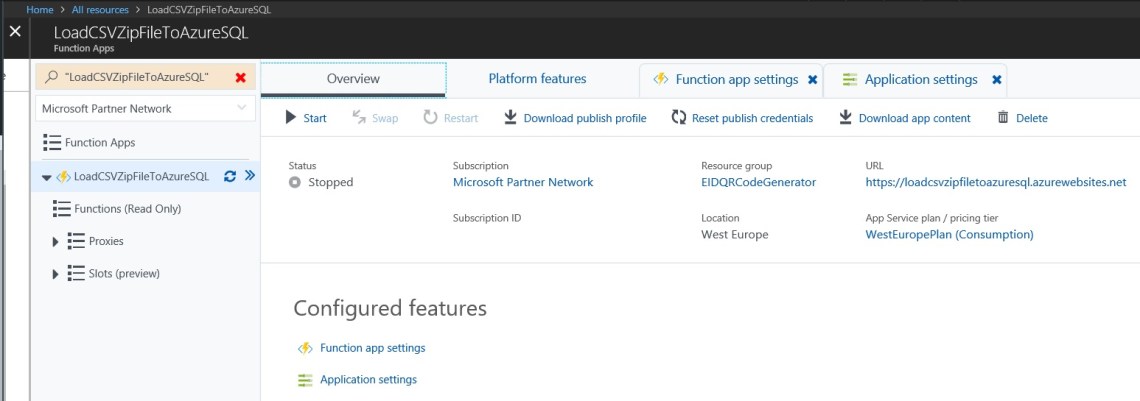

You function is now ready to go and can be managed (activated, stopped scale in/out the app service and so on) directly from the Azure Portal:

This sample code is available on my GitHub page. Happy “serverless” with NAV 🙂

Hello – i tried to reproduce but did not succeed because there is no information about the object behind the web service. Tried with codeunit – soap – not working. Could you please explain a little bit more your example ?

LikeLike

Hi Gustav. The source code is on GitHub. In this post I’ve only explained the Azure Function implementation. The code that saves the data to NAV is a simple call to a SOAP web service (or an OData endpoint).

LikeLike

Hi..

Thanks for sharing great knowledge. But, one other scenario is i want to extract file from 365 BC SaaS (pure cloud) and with azure logic need to transfer that file to ftp folder.

My concern is how we can extract a file from BC and store it to Azure Blob? So, after that i can use the azure function to take the file and move to FTP folder.

Kindly share your knowledge via email moh_rizkimaulana@live.com

Best Regards,

Rizki

LikeLike

Rizki, did you find any solution for exporting to Azure storage from BC cloud?

LikeLike

I’ve provided a full example on this (exporting/importing D365BC data to a file on Azure Storage by using Azure Functions from AL) on our upcoming book. Code is on GitHub here:

https://github.com/PacktPublishing/Mastering-Microsoft-Dynamics-365-Business-Central/tree/master/Chapter%2013

LikeLike